Apple called the new iPhones’ A12 Bionic chip “the smartest and most powerful chip ever in a smartphone.” And despite the company’s occasional hyperbole and frequent marketing wizardry, it’s not kidding around.

Here’s why the A12 is so exciting — and what that means for Apple’s Core ML machine learning platform.

The A12 Bionic is a powerhouse of a chip

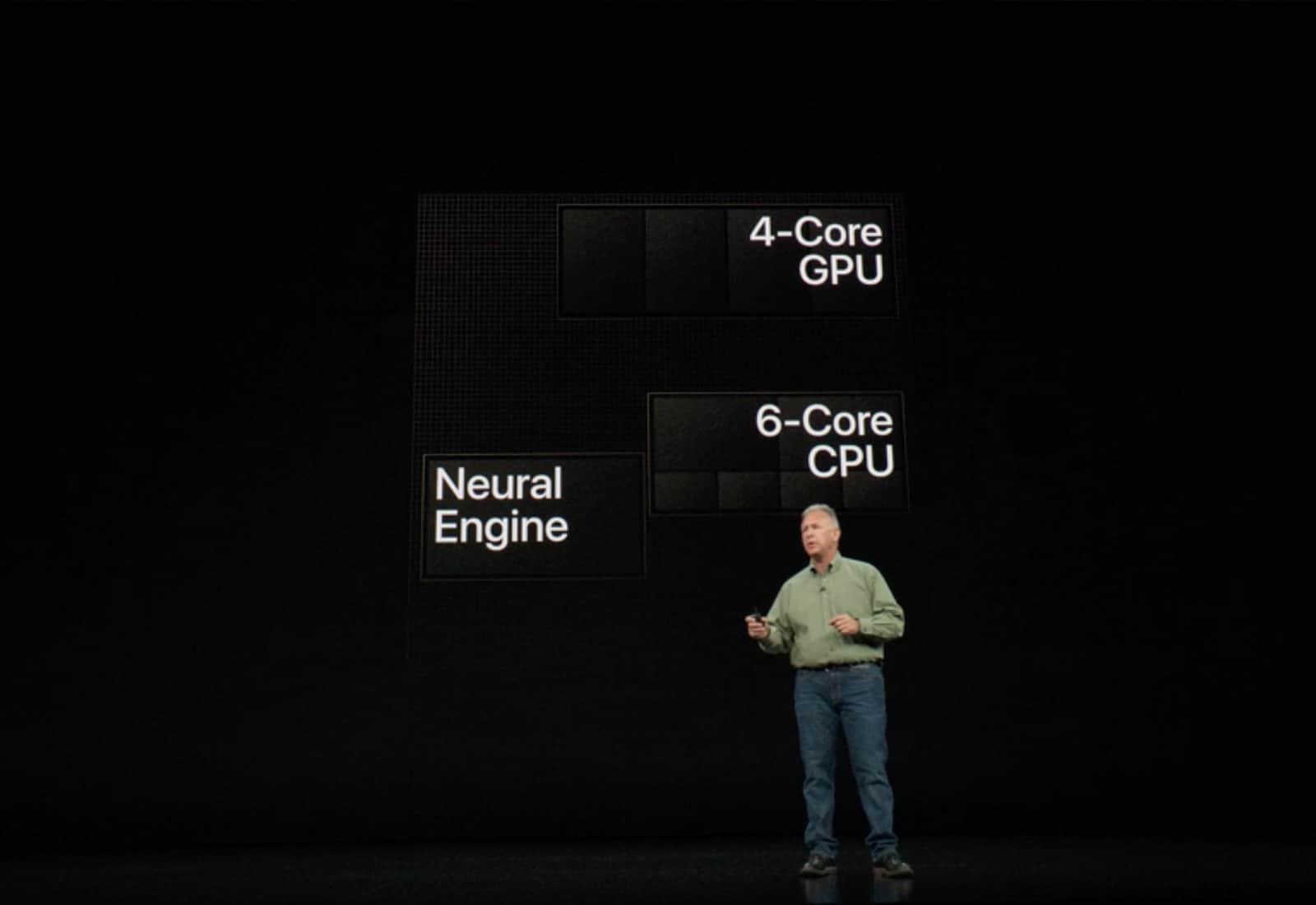

Apple’s new A12 Bionic chip is a massive upgrade. To call it a powerhouse would be an understatement. It boasts a six-core CPU, with two cores dedicated to performance and four to efficiency. There’s also a four-core GPU, which Apple says is up to 50 percent faster than the one in last year’s A11.

Last, but certainly not least, there’s the Neural Engine. This is the part of the chip that’s made purely with artificial intelligence in mind. Apple having a dedicated chip core for running artificial neural networks isn’t new, of course. Last year, Apple introduced the first Neural Engine on the iPhone X, iPhone 8 and iPhone 8 Plus. This chip helps power the Face ID facial recognition software, as well as the computationally impressive Animojis.

A few things make this year’s upgrade monumental, however. First of all there’s the sheer power of the unit. Last year’s Neural Engine boasted two cores and was able to run a massive 600 billion operations every single second. This year’s version? Try eight cores and 5 trillion operations per second.
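To put those figures in perspective, here is a quick back-of-the-envelope calculation. It uses only the numbers quoted above (Apple's own claims, not independent benchmarks), and the per-core figure assumes throughput scales evenly across cores, which Apple has not confirmed.

```python
# Figures quoted in the article (Apple's own numbers).
A11_OPS_PER_SEC = 600e9  # 600 billion operations per second
A12_OPS_PER_SEC = 5e12   # 5 trillion operations per second
A11_CORES = 2
A12_CORES = 8

# How much more total work the new Neural Engine can do per second.
throughput_ratio = A12_OPS_PER_SEC / A11_OPS_PER_SEC

# How much of that gain comes from simply having more cores.
core_ratio = A12_CORES / A11_CORES

# What is left over is the gain per core (assuming even scaling).
per_core_ratio = throughput_ratio / core_ratio

print(f"Total throughput: {throughput_ratio:.1f}x")
print(f"Core count: {core_ratio:.0f}x")
print(f"Per-core throughput: {per_core_ratio:.1f}x")
```

In other words, the jump is a little over eightfold overall. Quadrupling the core count accounts for most of it, with each core roughly twice as fast on paper.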

This may be the result of the smaller 7-nanometer process used to make the A12 chips, compared to last year’s 10-nanometer process. Whether Apple or Huawei can truly claim the first 7nm chip is up for debate, since Huawei showed off its 7nm Kirin 980 last month. However, Apple will ship the new iPhones first. And, with 6.9 billion transistors packed into a tiny chip, it’s an astonishing feat.

There’s also a new “smart compute system.” It ensures the chip can work out which part of the processor (CPU, GPU or Neural Engine) should handle a particular task.

iPhone XS Neural Engine: Better, faster, stronger


So how will an iPhone XS owner notice this new, improved Neural Engine? The most immediate way is overall speed. Face ID didn’t exactly lag with the iPhone X, but it will work even faster on the next-gen iPhones.

But the most significant impact won’t be seen on Day One. This will come about as a result of the new third-party apps made possible by the combination of the A12’s Neural Engine and Apple’s developer-focused machine learning platform, Core ML.

Core ML was introduced with iOS 11 with the goal of allowing apps to run machine learning models locally on devices, rather than having to send data off for processing on a remote server.

According to Apple, the new A12 Bionic chip can run Core ML nine times faster, while using one-tenth the energy. That means much faster, more intensive number-crunching AI apps — that don’t use nearly as many iPhone resources in the process.
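Here is a rough sketch of what those two claims could mean for an app that processes live video. The baseline latency and energy numbers are hypothetical, chosen purely for illustration, and the calculation assumes Apple's best-case figures apply in full to the model in question.

```python
# Apple's claims, taken at face value as best-case figures.
SPEEDUP = 9.0          # "nine times faster"
ENERGY_FRACTION = 0.1  # "one-tenth the energy"

# Hypothetical cost of one model inference on last year's chip.
# These two numbers are made up for illustration only.
a11_latency_ms = 90.0
a11_energy_mj = 10.0

# Time available per frame for a 30 frames-per-second video feed.
FRAME_BUDGET_MS = 1000 / 30

# Apply the claimed improvements to the hypothetical baseline.
a12_latency_ms = a11_latency_ms / SPEEDUP
a12_energy_mj = a11_energy_mj * ENERGY_FRACTION

# Can the model keep up with every frame of the video?
fits_on_a11 = a11_latency_ms <= FRAME_BUDGET_MS
fits_on_a12 = a12_latency_ms <= FRAME_BUDGET_MS

print(f"A11: {a11_latency_ms:.0f} ms per frame, real time: {fits_on_a11}")
print(f"A12: {a12_latency_ms:.0f} ms per frame, real time: {fits_on_a12}")
print(f"Energy per inference: {a11_energy_mj:.0f} mJ -> {a12_energy_mj:.0f} mJ")
```

Under these assumptions, a model that was too slow to analyze every video frame on last year's chip would fit comfortably within the frame budget on the A12, while drawing a fraction of the power.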

Better camera apps for iPhone photography will be an obvious use case. But that’s not the only illustration of how this chip will be used. At yesterday’s event, Apple showed off HomeCourt, a basketball app that can track six different metrics of a player’s performance in real time. Apps such as this will be able to handle more information and present it to you faster. We’re expecting some mind-blowing augmented reality apps to come out of this, too.


AI competition heats up

Apple is far from the only tech company investing heavily in artificial intelligence. Huawei has developed its own AI chips for smartphones, and Samsung is busy beavering away on its own dedicated AI chip, too. Google’s 2017 Pixel 2 smartphone boasted its own custom chip, developed with Intel, for use in neural network-aided image processing.

But considering that just a few years ago Apple was languishing behind the pack in AI, its progress is truly astonishing. As exciting as it was to see new devices like the Apple Watch Series 4 at yesterday’s Apple event, I’m most excited about seeing what the A12 Bionic has up its sleeve, and what that’s going to mean for developers (and the rest of us!) over the next 12 months.