iOS 14’s built-in pornographic content blocker stymies searches that include the word “Asian,” according to a computer science student.

This means searches for “Asian food” or “Asian countries” are blocked if the adult content filters are enabled. Similar blocks aren’t in place for search terms including “black,” “white,” “Arab,” “French” and other national or racial descriptors.

Steven Shen, a computer science student at Indiana’s Purdue University, noted the trend on Twitter.

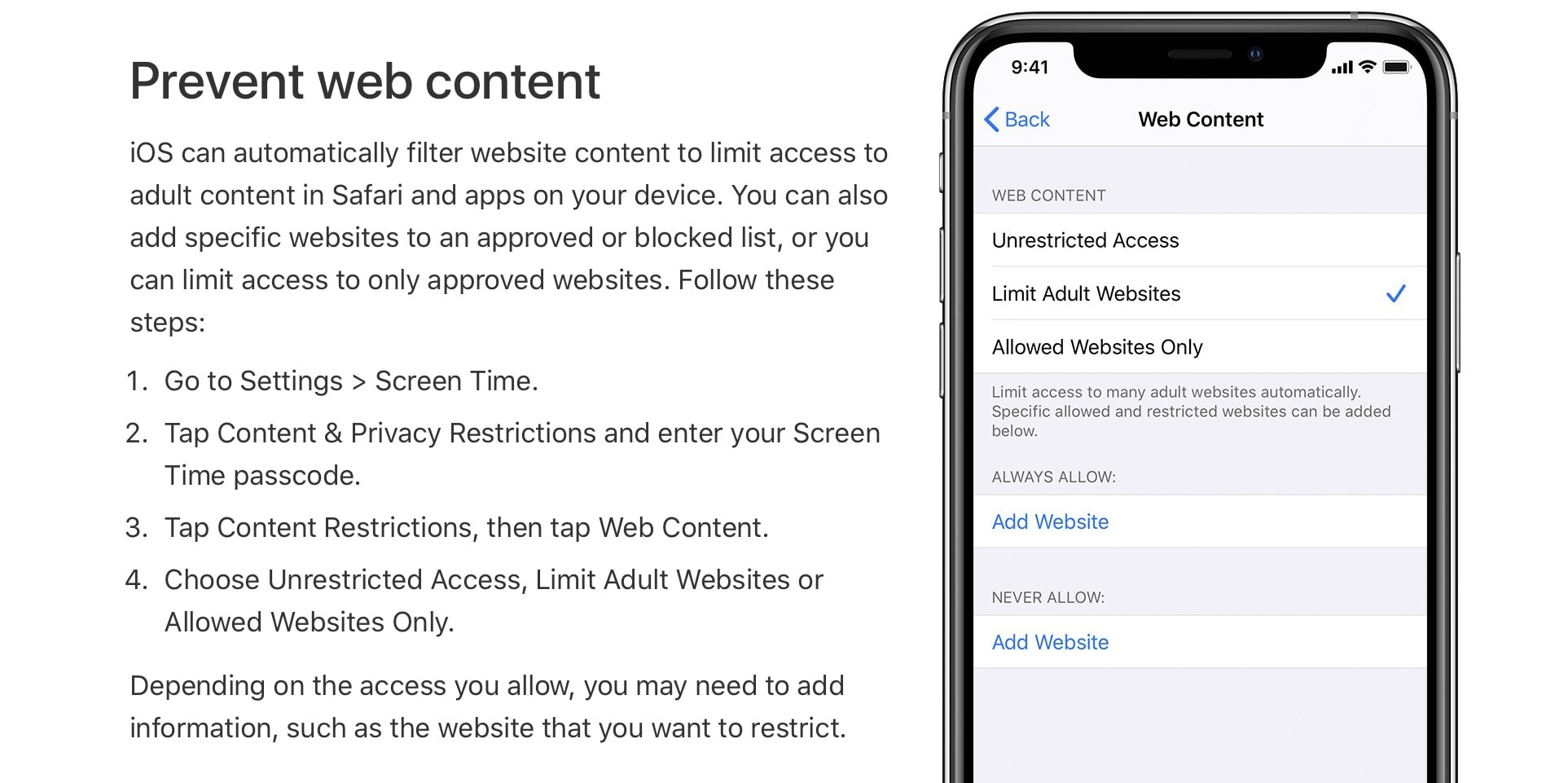

“On iOS, if you turn on ‘Limit Adult Website’ under Screen Time->Content Restrictions, Safari blocks any website URL containing the word ‘asian’,” Shen wrote. “Seriously, go try it, it’s unbelievable. I filed a Feedback a long time ago. Nothing changed.”

A similar optional adult content blocker is available on the Mac. On macOS, however, the word “Asian” remains searchable after the content blocker is turned on.

> On iOS, if you turn on “Limit Adult Website” under Screen Time->Content Restrictions, Safari blocks any website URL containing the word “asian”. Seriously, go try it, it’s unbelievable. I filed a Feedback a long time ago. Nothing changed. Please RT for visibility. @AppleSupport
>
> Steven Shen (沈畅) (@Stevenpotato), February 3, 2021

Unlikely to be a human error

In an interview with The Independent, Shen said the block was unlikely to have been coded by hand. Instead, he suggested, it most likely comes from an AI system that flags words frequently associated with pornographic searches. That would explain why the iOS content blocker also squelches terms like “teen,” “amateur” and “mature,” even though all three have legitimate uses outside adult content.

Apple’s competitors have run into similar problems with AI. Google, for example, had trouble with image-classification algorithms that misidentified photos of Black people as gorillas. Microsoft, meanwhile, launched a chatbot called Tay, which online users quickly “trained” to behave in a racially insensitive manner.

If Shen is correct, Apple did not have a human coder draw up a list of blocked terms. Instead, the list comes from correlations in a dataset, which, it could be argued, reflect broader discriminatory societal trends and attitudes. (If you’re interested in this topic, I highly recommend reading Algorithms of Oppression by Safiya Noble.)

Apple CEO Tim Cook frequently speaks out against racism and about the importance of diversity. Now that this iOS glitch has been spotted and publicized, it seems likely that Apple will fix it quickly. Still, it’s a lesson in best practices for AI.