Photos already has a pretty decent search function on iOS 11. Thanks to Apple’s machine-learning tech and AI categorization, you can search for thousands of “scenes.” These include the places where you took the photos, but also anything from abacus to zucchini, people in the images, and times the images were taken.

This has gotten even better in iOS 12. You can still search on many thousands of categories and keywords, but now you can combine searches. For instance, you could search for several different people, and see only the photos that contain all of them. Or you can combine search terms like Christmas, Food, and 2015. Let’s take a look.

Find people, places and things with Photos search

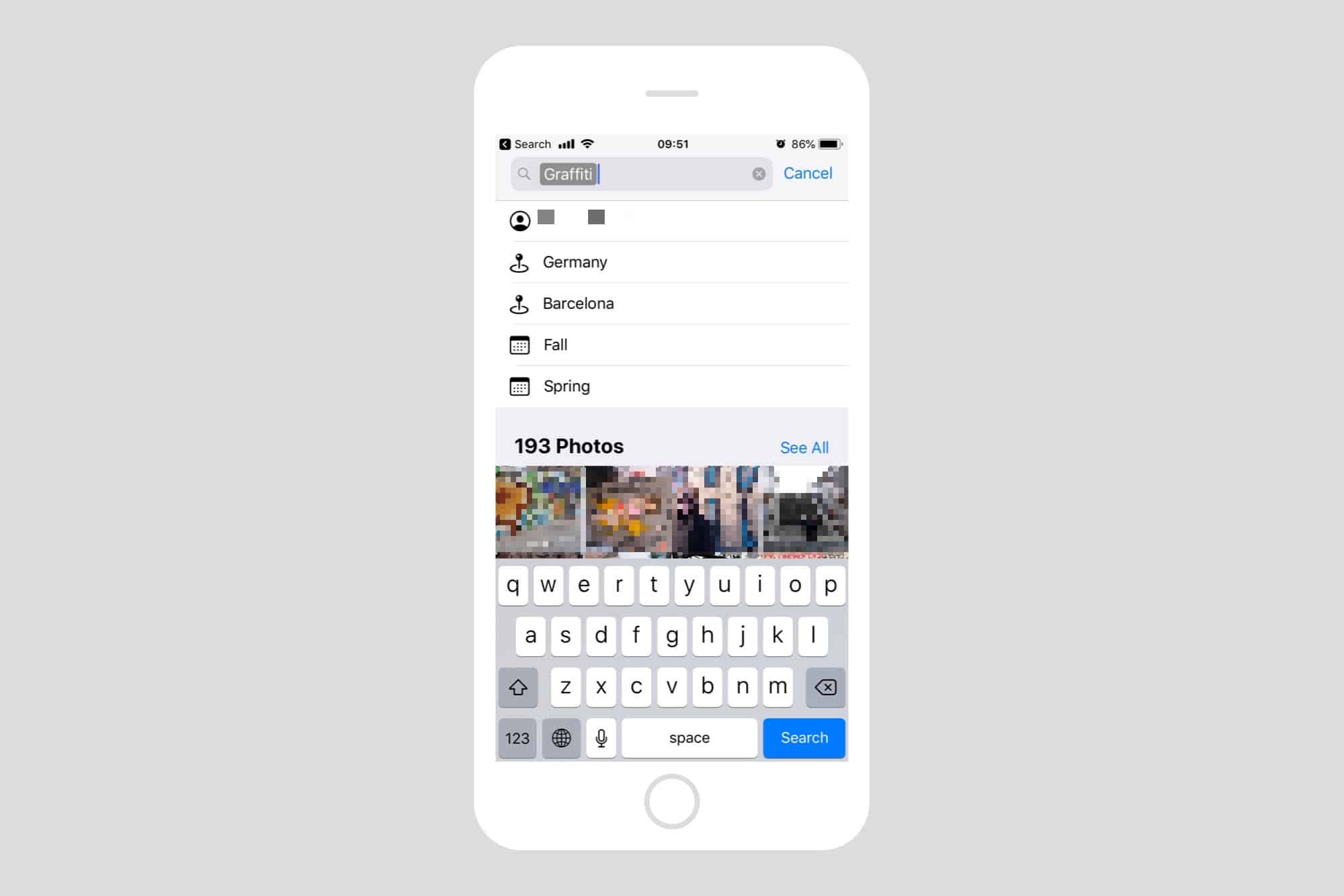

The best way to explain how the new search works is to see it in action. It’s super-intuitive. Just like in iOS 11, you type your search into the search box inside the Photos app. The difference is, now those search terms become tokens. Let’s play along. First, type whatever you’re searching for into the search box. I’ll pick graffiti, but you can pick anything. As you type, you’ll see search suggestions appear in a list below the search box. You’ll also see that the search results are already showing below that. More on that later.

Tap one of the suggested search terms, and it will turn into a token inside the search box. You can tell it’s a token because it now has a gray box around it.

Now, you’ll see further suggestions, based on the results:

Photo: Cult of Mac

To view all your photos containing graffiti, just tap See All. Or you can narrow the results by tapping the suggested search tokens in the list. As you can see, these are time-based (Fall, Spring), location-based, or people. People are pulled in from the Faces albums, where iOS uses facial recognition to group photos by person.

To use any of these tokens, just tap as many as you want. The list of suggested tokens will update as you do so — for instance, after you tap a place, more people may be suggested. Or perhaps after you pick a town or city, the list will switch to more specific locations within that city.

Create your own Photos searches

Tapping the suggested search tokens is a quick way to get where you want, and because iOS 12 only suggests tokens that have corresponding photos, you’ll never be left with a blank list showing no results.

Photo: Cult of Mac

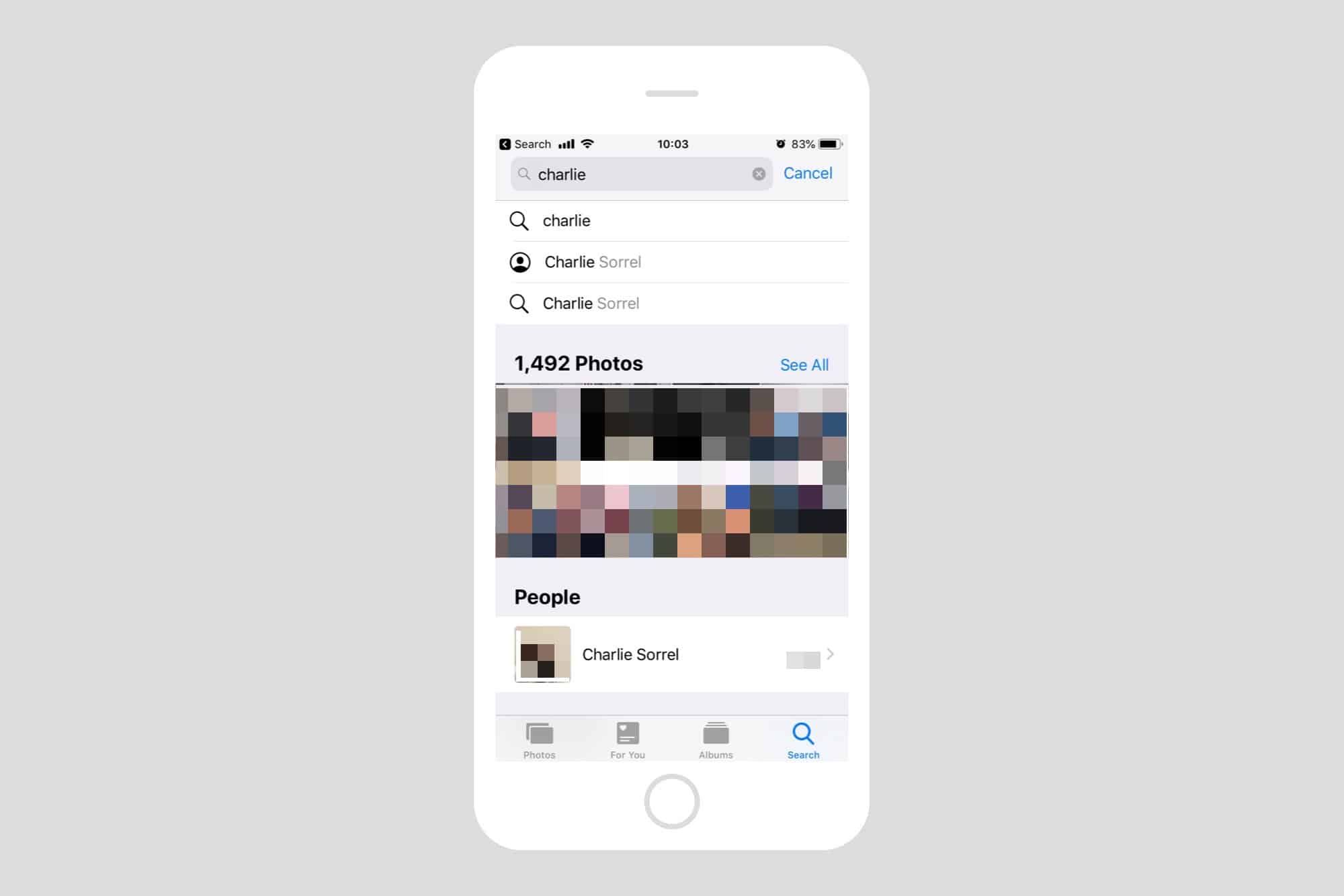

But you don’t have to combine suggested tokens. You can also keep typing in the search field to add your own. For instance, I could find photos of me and my mother by first typing Charlie, then tapping on my name when it appears in the list. Then, I type my mother’s name, or Mom, or however I might have it set up in the Faces section of Photos.

Instantly, Photos returns images which have both of us in them. And you can further narrow down the search by adding places, or other keywords. Want to see that photo where your weird uncle showed that giant zucchini to your cousins, at sunset? You can search on their names, plus zucchini, plus Sunset Sunrise, and you’ll find it.
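If you like to see the logic spelled out, here is a minimal sketch of how combined tokens could behave. It is a toy model only, not Apple’s implementation: the `LIBRARY` dictionary, its file names, and its tags are all made up for illustration, as are the `search` and `suggest` helpers. It shows two ideas from above: every token you add narrows the results to photos matching all of them, and suggestions are drawn only from tags on the photos still in the results.

```python
# Toy model only: not how Photos works internally.
# The photo names and tags below are invented for illustration.
LIBRARY = {
    "IMG_0001": {"Charlie", "Mom", "Christmas", "2015"},
    "IMG_0002": {"Charlie", "Graffiti", "Spring"},
    "IMG_0003": {"Mom", "Zucchini", "Sunset Sunrise"},
    "IMG_0004": {"Charlie", "Mom", "Food", "Christmas", "2015"},
}


def search(tokens):
    """Return the photos that carry every token (an AND search)."""
    wanted = set(tokens)
    return sorted(name for name, tags in LIBRARY.items() if wanted <= tags)


def suggest(tokens):
    """Suggest only tags found on the current results, so that adding
    any suggestion still leaves at least one photo."""
    wanted = set(tokens)
    remaining = set()
    for name in search(tokens):
        remaining |= LIBRARY[name] - wanted
    return sorted(remaining)


print(search(["Charlie", "Mom"]))   # photos containing both people
print(suggest(["Charlie", "Mom"]))  # tokens that can narrow the results further
```

In this made-up library, searching for Charlie and Mom returns the two Christmas photos, and the suggestions are limited to tags those two photos actually carry.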

Amazing, instant results

Results are broken into sections, depending on what iOS 12 finds. At the top of the list is always the See All section, but below that you will find Moments and Categories. The Moments results are great, because they place the photos in the context of other photos taken around the same time, or in the same place. It’s the ultimate shoebox experience, the digital equivalent of opening up a shoebox of real photographs, and getting lost in the stream of memories.

Master categories

Photo: Cult of Mac

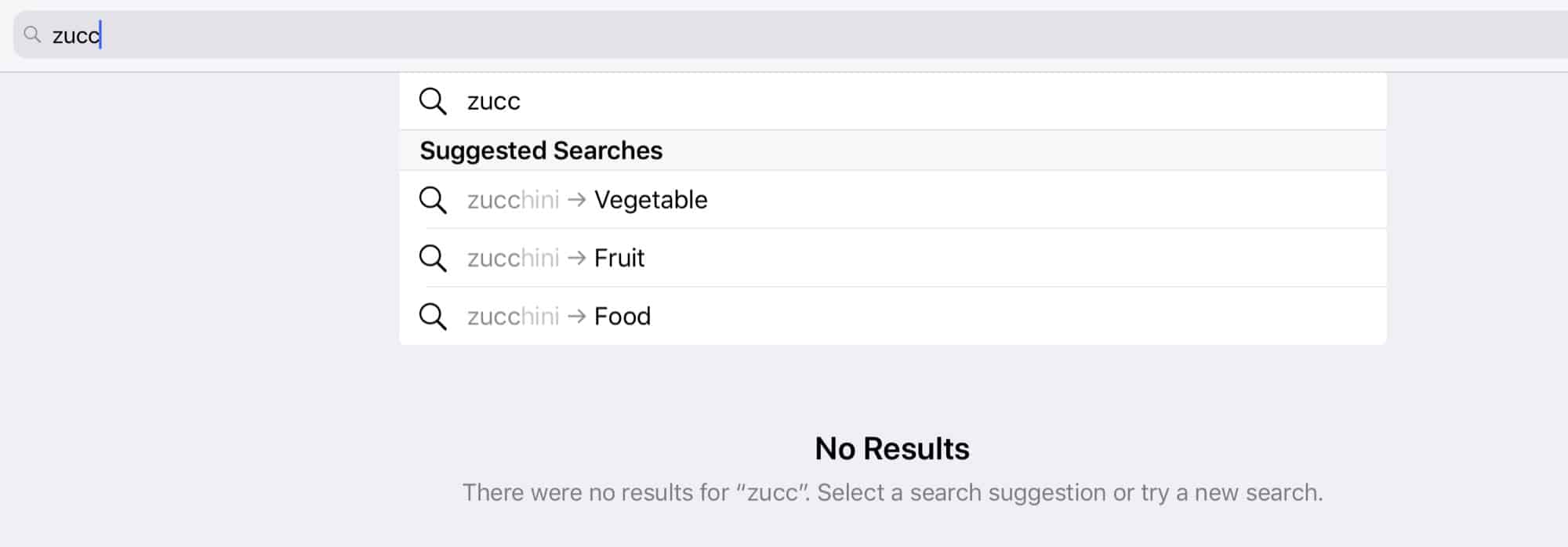

One neat feature worth mentioning is that hierarchies are now exposed to you, the user. A hierarchy is something like Vegetable > Zucchini, and it seems to appear in the search results list whenever a clarification is required for the search.

For instance, in the above example, Photos seems to know what a zucchini is, but there are no results in my library, so it is offering me the option to search for all fruits or vegetables instead, or even just food. This is an elegant solution which recognizes that the app’s AI isn’t perfect. Maybe there are some photos of zucchini in my library, and Photos just hasn’t spotted them. By offering to show me all the veggie photos, it’s using its AI to narrow the search for me. That’s pretty clever.

FWIW, there are no zucchini photos in my library. There are, however, several cabbages, and the Photos app didn’t spot those either. It did successfully offer me the option to search vegetables, fruits, and melons (!) to find them, though.
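The fallback behavior described above can be sketched in a few lines. Again, this is only a guess at the idea, not Apple’s code: the `PARENT` hierarchy, the `PHOTOS` tags, and the helper names are all hypothetical. When the exact term finds nothing, the sketch offers the broader categories that do have photos.

```python
# Toy model only: the hierarchy and photo tags are invented for illustration.
PARENT = {
    "Zucchini": "Vegetable",
    "Cabbage": "Vegetable",
    "Vegetable": "Food",
    "Melon": "Fruit",
    "Fruit": "Food",
}

PHOTOS = {
    "IMG_0101": "Vegetable",  # a cabbage recognized only as "some vegetable"
    "IMG_0102": "Melon",
}


def ancestors(category):
    """Walk up the hierarchy, e.g. Zucchini -> Vegetable -> Food."""
    chain = []
    while category in PARENT:
        category = PARENT[category]
        chain.append(category)
    return chain


def photos_in(category):
    """Photos tagged with the category itself or anything beneath it."""
    return sorted(
        name for name, tag in PHOTOS.items()
        if tag == category or category in ancestors(tag)
    )


def broader_options(term):
    """If the exact term has no photos, offer the broader categories that do."""
    if photos_in(term):
        return []
    return [c for c in ancestors(term) if photos_in(c)]


print(broader_options("Zucchini"))  # broader categories worth offering
print(photos_in("Vegetable"))       # what tapping one of them would show
```

In this invented library there are no photos tagged Zucchini, so the sketch offers Vegetable and then Food, and the mislabeled cabbage turns up under Vegetable.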

The new photo search in iOS 12 is not only way more powerful, but also a lot easier to use, which is a rare combination. I use Photos’ search features every day, and it’s pretty awesome. You can even search your photos using Siri.