Today, your iPhone is a gadget, a mere consumer appliance. But your future iPhone will become increasingly human. You’ll have conversations with it. The phone will make decisions, prioritize the information it presents to you, and take action on your behalf — rescheduling meetings, buying movie tickets, making reservations and much more.

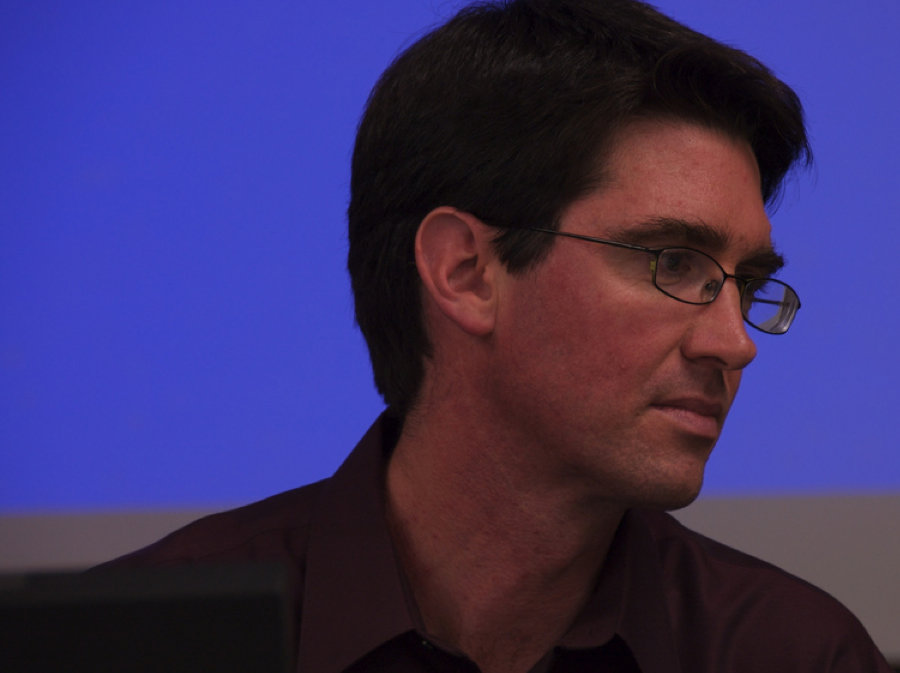

In short, your iPhone is evolving into a personal assistant that thinks, learns and acts. And it’s all happening sooner than you think, thanks to the guy pictured above.

The history of interface design can be oversimplified as the application of increasing compute power to make computers work harder for human compatibility.

In the beginning, humans did all the work because computers weren’t smart enough to handle human language. Code had to be punched in. Output had to be translated.

When computers became more powerful, they could interact with users with something similar to human language via a command line interface. Later, PCs and workstations were re-imagined to handle icons, windows, trash cans and dragging and dropping and all the rest.

As Moore’s law continues to deliver ever more powerful processors at lower cost, that available power can be applied to the creation of even more human-compatible interfaces that include touch, “physics,” gestures and more.

Ultimately, however, human beings are hard-wired to communicate with other people, not computers. And that’s why the direction of interface design is always heading for the creation of artificial humans.

There are four elements to a machine that can function like a person: 1) speech; 2) decision-making algorithms; 3) data; and 4) “agency,” the ability to act in the world on your behalf.

Apple already has rudimentary technology and partnerships to achieve all this. The initial launch of the iPhone 5 will likely offer a small sampling of this technology, and subsequent releases of iOS will dribble out more. Eventually, your iPhone will function as a virtual human that you can talk to, that suggests things to you, that you can send running on errands.

When you get your shiny new iPhone 5, you’ll notice a new feature called the Assistant. This feature won’t be an app, but a broad capability of the phone that combines speech, decision-making, data and agency to simulate a virtual human that lives in your pocket.

For speech, Apple has maintained a long-standing partnership with Nuance, the leading speech-recognition company. A version of iOS 5 with Nuance Dictation has reportedly been sent out to carriers for testing.

For decision-making algorithms, Apple can rely on the amazing technology it purchased in April 2010, when it bought Siri, a company that created a personal-assistant application: you talk to it, and it figures out what you want.

Many iPhone users don’t know this, but Siri is still available free in the App Store. You should be using it every day. You talk to Siri in your own words, and Siri’s algorithms figure out what you mean. Siri sorts through a large number of possible responses and chooses the best one with uncanny accuracy.

For data, Apple can use your own behavior with the phone, which can inform the system where you are, where you’ve been, what your preferences are, what your schedule is, who you know, and much more. Solid rumors suggest deep integration of the Assistant with Calendar, Contacts, E-mail and more.

And for agency, Apple can rely on the technology and partnerships developed under the Siri project. The existing Siri app leverages partnerships with OpenTable, CitySearch, Yelp, YahooLocal, ReserveTravel, Localeze, Eventful, StubHub, LiveKick, MovieTickets, RottenTomatoes, True Knowledge, Bing Answers and Wolfram Alpha.

These services provide both judgement (you can say, “find me a GOOD Mexican restaurant”) and agency (you can say, “make me a reservation”). Siri is designed to actually book you a hotel reservation, buy movie or concert tickets and much more.
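To make the split between judgement and agency concrete, here is a minimal sketch in Python. It is not how Siri works internally; the keyword matching, the sample listings, the ratings and every function name are hypothetical stand-ins, and the request is assumed to have already been transcribed from speech to text.

```python
from dataclasses import dataclass


@dataclass
class Restaurant:
    name: str
    cuisine: str
    rating: float  # hypothetical 0-5 score, as a review partner might supply


# Hypothetical sample data standing in for a partner service's listings.
LISTINGS = [
    Restaurant("Casa Azul", "mexican", 4.6),
    Restaurant("Taco Stop", "mexican", 3.1),
    Restaurant("Trattoria Roma", "italian", 4.4),
]


def find_good(cuisine, listings=LISTINGS, min_rating=4.0):
    """Judgement: keep well-rated places of the requested cuisine, best first."""
    matches = [r for r in listings if r.cuisine == cuisine and r.rating >= min_rating]
    return sorted(matches, key=lambda r: r.rating, reverse=True)


def make_reservation(restaurant, party_size, time):
    """Agency: stand-in for a real booking call; returns a request record."""
    return {
        "restaurant": restaurant.name,
        "party_size": party_size,
        "time": time,
        "status": "requested",
    }


def handle(request):
    """Route a transcribed request using simple keyword matching."""
    text = request.lower()
    cuisine = next((c for c in ("mexican", "italian") if c in text), None)
    if cuisine is None:
        return None  # nothing this toy assistant understands
    candidates = find_good(cuisine)
    if not candidates:
        return None
    if "reservation" in text:
        # Party size and time are fixed here purely for illustration.
        return make_reservation(candidates[0], party_size=2, time="19:30")
    return candidates[0]
```

Asking `handle("Find me a good Mexican restaurant")` returns the top-rated match, while a request containing the word "reservation" goes one step further and produces a booking request. A real assistant would replace the keyword checks with proper language understanding and the stub with a call to a partner service.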

There is absolutely no question that Apple is getting into the virtual human racket, starting with the iPhone 5 and iOS 5. But Apple is a consumer electronics company. What the hell does Apple know about artificial intelligence?

The answer may shock you.

The iOS ‘Assistant’ is US Military Technology

The most expensive, ambitious and far-reaching attempt to create a virtual human assistant was initiated in 2003 by the Pentagon’s research arm, DARPA (the organization that brought us the Internet, GPS and other deadly weapons).

The project was called CALO, for “Cognitive Assistant that Learns and Organizes,” and involved some 300 of the world’s top researchers.

CALO’s mission, according to Wikipedia, was to build “a new generation of cognitive assistants that can reason, learn from experience, be told what to do, explain what they are doing, reflect on their experience, and respond robustly to surprise.”

All this research was orchestrated by a Silicon Valley research institute, SRI International (formerly the Stanford Research Institute, or SRI). The man in charge of the whole project was a brilliant polymath who worked as senior scientist and co-director of the Computer Human Interaction Center at SRI, Adam Cheyer (pictured above).

Cheyer is not only one of the world’s most renowned artificial intelligence scientists, he’s also one of the leading experts in distributed computing, intelligent agents and advanced user interfaces.

Cheyer now works as a director of engineering for Apple’s iPhone group. He also manages the awesome team he assembled for Siri. Together, these brilliant minds are inventing the future of cell phone interaction.

The iPhone 6 Virtual Human

Here’s how your iPhone 6 will probably work. When you want to make a call, search the web or send an e-mail, you’ll just hold the phone to your ear and say a command.

The phone will recognize your voice, which both authenticates your identity and enables the phone to cater specifically to your needs, your data and your vocabulary.

When you want to do the town, you’ll say things like “make me a reservation at a good Italian restaurant” and “buy me tickets for a good movie for after dinner.” The phone will do your bidding.

Before meetings, your phone will alert you. You’ll listen, and the phone will give you a briefing about the people you’re meeting, with history and personal information so you’re prepared. If you want to reschedule the meeting, the phone software will interact via e-mail with the people you’re meeting with, finding a new time when all are available, and adding the new meeting time to your calendar.
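The rescheduling step boils down to finding the first gap that is free on everyone's calendar. Here is a minimal sketch in Python of one way to do that. It assumes each person's busy times have already been collected as (start, end) pairs in minutes since midnight; the function name and data layout are hypothetical, not anything Apple has described.

```python
def first_common_slot(busy_by_person, day_start, day_end, duration):
    """Return the earliest (start, end) window, in minutes since midnight,
    in which nobody is busy, or None if no such window exists."""
    # Pool everyone's busy intervals and sort them by start time.
    busy = sorted(iv for intervals in busy_by_person.values() for iv in intervals)

    cursor = day_start  # earliest moment not yet ruled out
    for start, end in busy:
        # The free gap runs from the cursor to the next busy start,
        # but never past the end of the working day.
        gap_end = min(start, day_end)
        if gap_end - cursor >= duration:
            return (cursor, cursor + duration)
        cursor = max(cursor, end)

    # Check the time left after the last busy interval.
    if day_end - cursor >= duration:
        return (cursor, cursor + duration)
    return None


# Hypothetical calendars: 540 is 9:00 a.m., 1020 is 5:00 p.m.
calendars = {
    "you": [(540, 600), (660, 720)],
    "alice": [(570, 630)],
    "bob": [(630, 660)],
}
slot = first_common_slot(calendars, day_start=540, day_end=1020, duration=30)
```

With these sample calendars, the morning is fully booked across the three attendees, so `slot` comes back as `(720, 750)`, a half-hour window starting at noon. The e-mail negotiation the phone would do is the harder part; this only shows the arithmetic once everyone's availability is known.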

Your iPhone will make suggestions about gifts, books, music and upcoming concerts. It will learn from your actions, getting better over time.

In short, your phone will evolve from a gadget to a virtual human, one you can talk to and that talks back. It will anticipate your needs, make decisions, and act on your behalf.

That’s the iPhone 6. Exactly how much of this virtual assistant technology will show up in the iPhone 5 is still essentially unknown. But what we do know is that Apple is definitely headed in this direction.

Are you ready to carry a virtual human in your pocket?

(Picture of Adam Cheyer courtesy of Tom Gruber.)
