Live Text is perhaps the coolest feature in iOS 15, macOS Monterey and iPadOS 15. It pulls the words out of images and lets you paste them into notes, emails, etc.

I’ve tested this on both iPhone and iPad. Here’s why I find it so amazing.

Surprisingly useful

Like so many people, I’ve gotten into the habit of taking a picture of anything I want to remember later. Live Text makes this even more useful. Rather than saving a whole image, I can turn the text in it into a note or a reminder.

For example, while out walking around, you can grab the name of a restaurant you’re interested in right off the sign. Then you can use it to start a web search.

Or suppose the coffee shop owner writes the Wi-Fi password on a board. You can use your iPhone’s camera to quickly grab the text and paste it into your device’s Settings in a couple of seconds to get online.

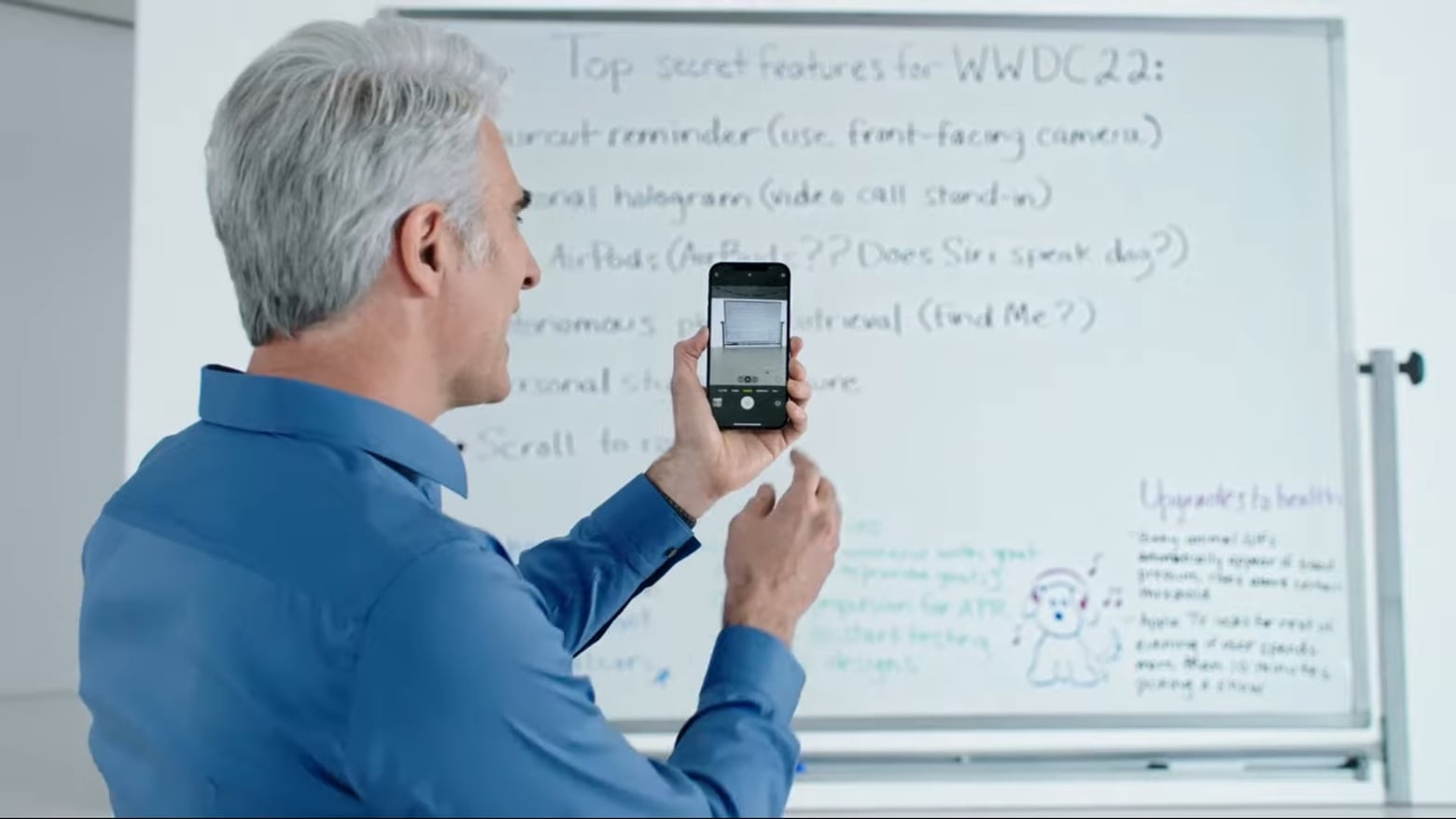

And students can copy everything off a whiteboard as text and paste it into their notes. That should come in handy for people who fill up whiteboards during meetings, too.

How to use Live Text in iOS 15

The best part of Live Text is that it’s so easy to use. There’s essentially no learning curve.

Consider an example. You find a recipe you like in a magazine. Take out your iPhone or iPad, open the built-in Camera app, and point it at the text as if you’re going to take a picture. Wait a second or two, and a small icon will appear in the lower right corner. Tap on it and a pop-up window will appear with the text in it. You can then select the words, sentences, etc., you want to copy.

Photo: Ed Hardy/Cult of Mac

The same process works with images in the Photos app. In this case, all the text will be highlighted in place, on the picture. You can then select the bits you want. Sometimes, the text icon doesn’t show up. In that case, tap and hold on the text you want to select. It’ll get selected unless the characters are too distorted.

And Live Text can often pull words out of images on web pages. In my testing, this option doesn’t work reliably, but I’m using the first betas of iOS 15 and iPadOS 15. There’s plenty of time for Apple to improve Live Text before the final versions launch.

Even at this early stage, Live Text works almost shockingly well. When copying something printed, it often proves 100% accurate. Even in bad conditions, you can usually capture almost all the text.

And it works almost as well with handwriting. Even cursive. The accuracy depends on the text being reasonably clear, but it doesn’t have to be perfect — just not chicken scratch. I tried it with a couple of notes sent to me recently and the results were about 95% correct.

Live Text can translate, too

Live Text is likely to come in handy for travelers because it also ties in with Apple’s translation service. For me, the best option is to take a picture of a menu, then open it and tap and hold on a phrase to select it. A pop-up window gives the option to convert the words to English (or your preferred language).

I did a bit of playing around with this and it seems reasonably useful. Translations aren’t perfect. But I’ve been in many restaurants in countries where I don’t speak the local language, and I know any guide to figuring out what to order is welcome.

Live Text is OCR for everyone, everywhere

Live Text is, of course, just an application of optical character recognition, tech that has been around for decades. But iOS 15, macOS Monterey and iPadOS 15 do it quite well. The new feature is convenient and very quick.

And because this is Apple, the process is done with privacy in mind. The word-recognition function happens on your device. The image is not uploaded to a remote server. That’s good for privacy, but it also means you don’t need a fast internet connection — or any connection at all.

However, you do need a relatively recent device. Your iPhone or iPad needs at least an A12 Bionic processor (introduced in 2018). And Live Text only works on M-series Macs, not ones running on Intel chips.

When Apple releases its next operating system updates for iPhone, Mac and iPad later this year, give Live Text a try. I think you’ll be as impressed as I am.