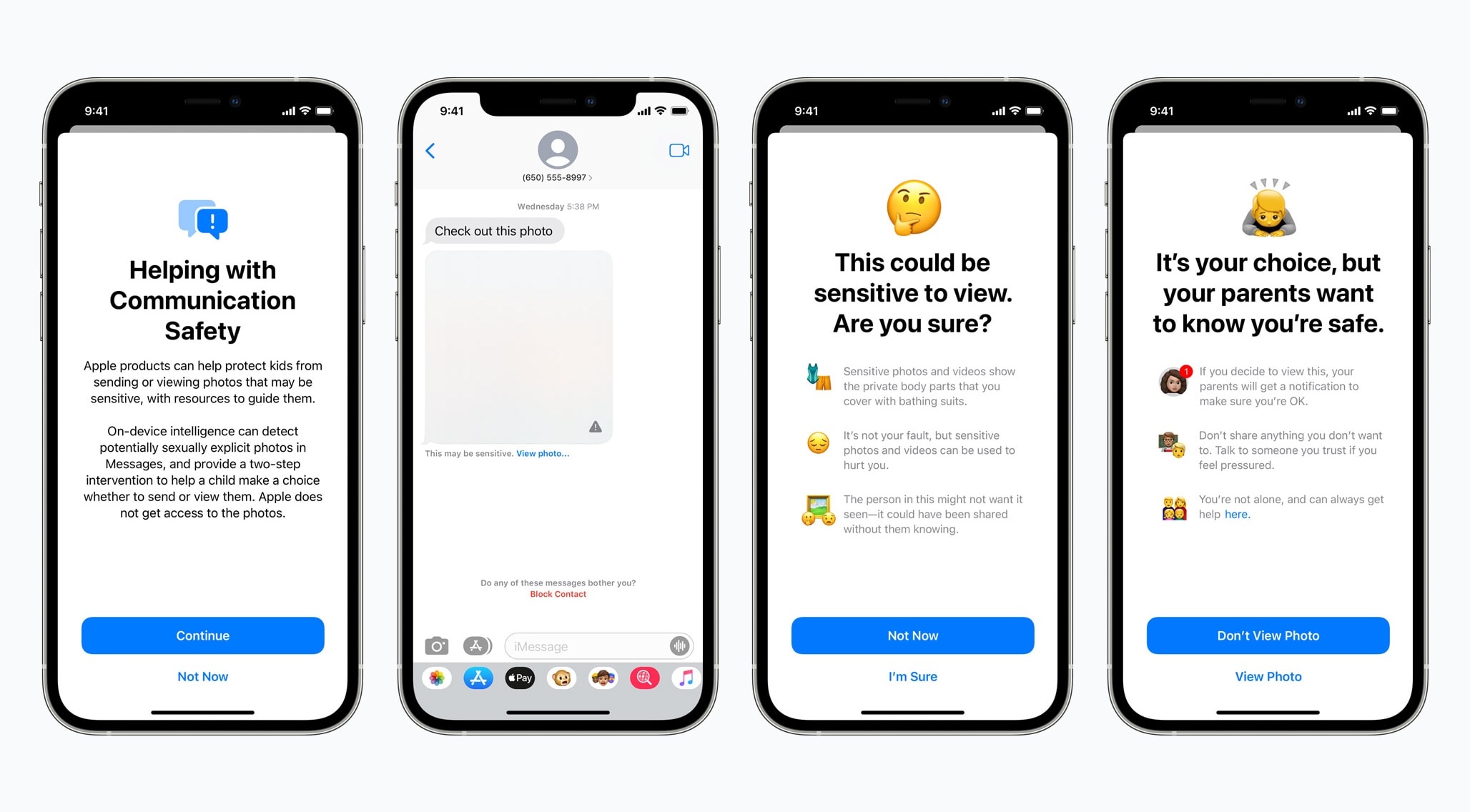

Starting with iOS 15.2, Apple devices will be able to detect when an iPhone or iPad user receives or sends a message containing sexually explicit photos. The goal is to protect children from sexual predators.

The feature is optional and uses on-device machine learning so that Apple does not have access to the images.

Kids and parents warned of sexually explicit photos

Apple announced in August that it would update the Messages app so that it could detect sexually explicit photos. The feature first appeared Tuesday in iOS 15.2 Developer Beta 2.

When the feature is fully available, a child who receives a nude photo in the Messages app on an iPhone or iPad will see a blurred-out image. If the child tries to view it, they will receive an alert that asks, “Are you sure?”

The warning is phrased so that a child can understand it. It says, in part, “Sensitive photos and videos show the private body parts that you cover with bathing suits.” If the child views the image after this warning, their parents will receive a notification.

A similar process happens if a child attempts to send sexually explicit photos. The child will be warned before the photo is sent, and the parents will receive an alert if the child chooses to send it anyway.

The feature is off by default. It must be activated by a parent or guardian for devices that are part of a Family Sharing plan.

This feature is not part of the controversial plan to scan photos stored on people’s iPhones and on iCloud.