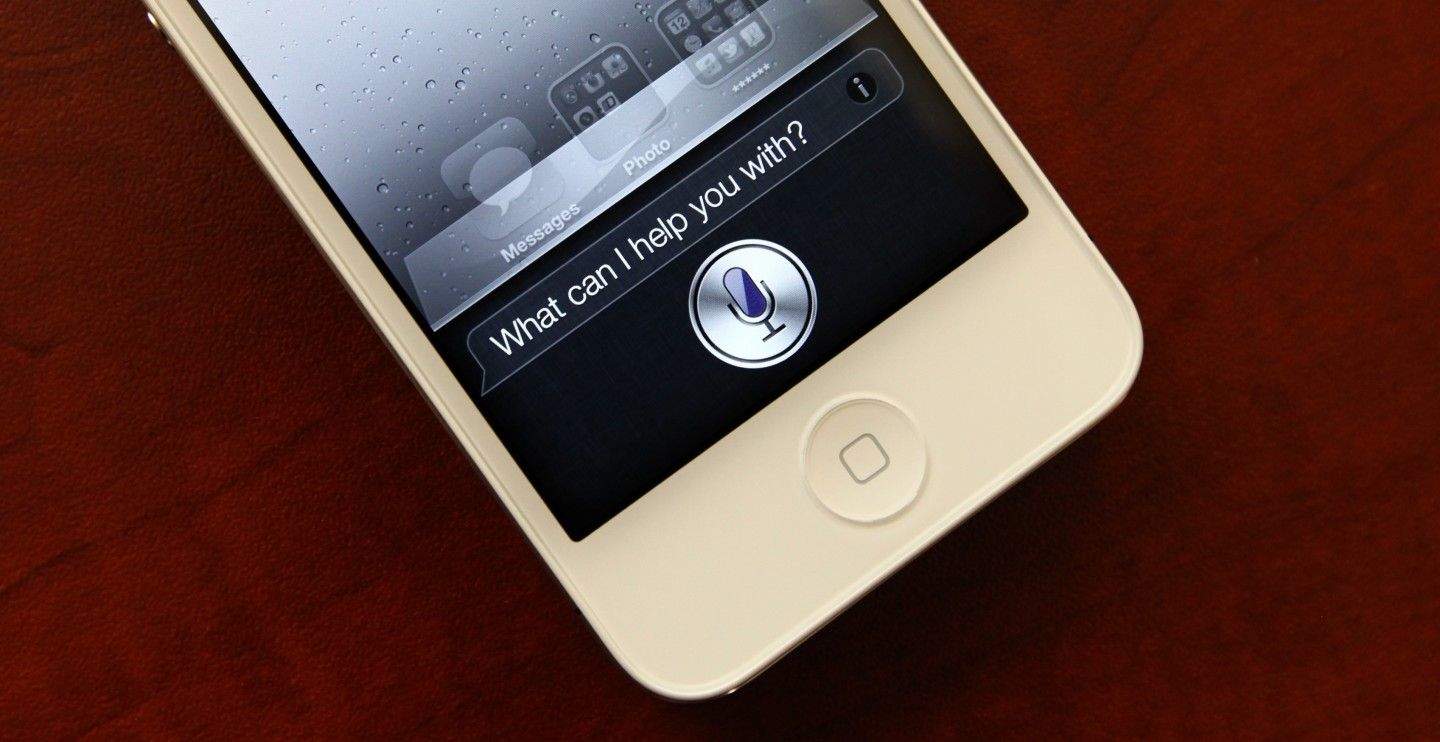

For many people, Siri has been more of a nuisance than an empowering personal assistant since debuting on the iPhone 4s in 2011. Sure, she’s received some upgrades and is getting even more in iOS 8, but fancy new features mean nothing if she can’t understand what you’re saying.

Siri’s favorite line, “Sorry, I didn’t get that,” might soon be a thing of the past, though, as a report from Wired says the time is ripe for Apple to unleash a neural-net-boosted Siri.

Over the last three years of development, Apple has turned Siri into a search engine of sorts, drawing on third-party sources like Wolfram Alpha, Yelp, Wikipedia, and Shazam. Siri can help with your math homework, identify new songs and let you buy them, and tell you sports scores, but understanding what you’re saying could be the biggest upgrade of all.

According to the report, Apple hired Alex Acero, who spent 20 years researching speech technology at Microsoft, as a senior director in its Siri group. Apple has also poached top speech recognition talent from Nuance.

“Apple is not hiring only in the managerial level, but hiring also people on the team-leading level and the researcher level,” says Abdel-rahman Mohamed, a postdoctoral researcher at the University of Toronto who was courted by Apple. “They’re building a very strong team for speech recognition research.”

Microsoft and Google have been using neural network algorithms to power Skype and Android voice search, with noticeably better results. Apple is the only major tech company that hasn’t adopted the technology. Apple said nothing about it at WWDC, but if Microsoft’s head of research is right, Siri could get its new neural network superpowers within six months.

Source: Wired

16 responses to “Siri might ditch Nuance so it can finally understand what you’re saying”

Notably missing from 3rd party sources Apple draws on: Google. WTF Apple? Can we give up the petty bullshit and start doing the Right Thing?

The whole point of using a combination of sources like wolfram, yelp, etc, is so Apple doesn’t have to rely on Google. It may sound petty, but google search isn’t great at everything. Using the best third-party source for each category is a better approach than just depending on Google.

Absolutely, @BusterH!!

“Find me the nearest El Fenix…. EL FENIX, not…. oh, f’nevermind.”

Samsung is rumored to be negotiating to buy Nuance. I suspect that is the real reason Apple is moving quickly to staff up.

This is something years in the making, not a new idea brought on by Samsung looking to scoop up Nuance. Check out the full Wired article for more of the history behind neural networks.

“Apple has also poached top speech recognition talent from Nuance.” What’s the point of buying a company if the brains that got it to where it is are gone?

In 2013, Apple hired Larry Gillick, who was the chief speech scientist at the Siri group. In 2011, Apple hired Norman Winarsky, who was one of the co-founders of Siri.

Apple has all the cash it needs to snap up Nuance. Instead, they want to waste it on crap like Beats.

Yeah, I know this post is childish, but considering I hate Nuance with a passion I’ve never felt against any other company, this is great news. I started with MacSpeech, which was awful despite the promises. I then stupidly paid for 3 DragonDictate upgrades, none of which worked either. Support was nonexistent. Upgrades always cost a fortune. I will never buy from this company again.

I’m not happy with Siri. The last 2 things I’ve searched for, she’s been utterly useless (kind of like a real woman). However, I click over to the Google app, use the “speak” function on it, and it gets me what I wanted with no issues.

Siri was pretty useless at launch (especially with my New Zealand accent) but it’s been fine for a long time. I haven’t had her mishear me for ages.

Google Search is not “noticeably better”. Do you guys even use Siri vs. Google Now? I do every day and what you say is not true. BTW: Ever try to use Google Now from a locked Android screen with anything other than a Moto X?

Ever try to send a text message with Google Now? What about the speech output back to you when hands-free?

$3+ billion for a marketer of crappy headphones and a me-too music service, but no cash to buy Nuance? I really wonder what is going on in Tim Cook’s head.

Nuance is being shopped and Samsung is sniffing around. Nuance holds a truckload of patents related to speech recognition, and it would doubtless be far cheaper to buy Nuance than to spend a fortune skirting their patents.

Apple will do what Google did, by creating their own, and will have a better service for it too. They’re off to a good start. They now own a company that’s done great things with speech recognition (Novauris) and the guy who created Dragon Dictation and sold it to Nuance, Dr. James Baker. Google did something similar when they hired Nuance’s co-founder Mike Cohen. Apple has even been building a neural network for Siri. This makes Nuance a horrible acquisition.

I am happy for any technology improvements, but I really don’t identify with articles like this. I have used Siri since it was introduced. I rarely type text messages anymore, and I frequently use Siri to dictate emails. I use Siri for reminders, setting timers and alarms, quick searches, and occasionally launching apps. I easily get 99% speech recognition from Siri, even with moderate ambient noise. Maybe more people should ask Siri to look up the definition of the word “diction”.