The LiDAR Scanner in the iPhone 12 Pro makes Apple’s new flagship device an augmented reality powerhouse. It lets the handset build an accurate 3D map of its surroundings, into which virtual objects can then be placed.

However, Apple appears to have added the scanner to the iPhone 12 Pro models as part of a “build it and they will come” strategy. During Tuesday’s launch event, the company showed few specific applications that demonstrate the technology’s benefits.

What the heck is LiDAR?

“Lidar stands for light detection and ranging, and it measures how long it takes light to reach an object and reflect back,” said Francesca Sweet, Apple’s iPhone product line manager. “We’ve adopted this technology for iPhone, and with the machine learning and depth frameworks of iOS 14, iPhone understands the world around you, and builds a precise depth map of the scene.”

Apple’s version of this time-of-flight sensor has a range of 16 feet (about 5 meters). It works indoors and outdoors, and creates depth maps nearly instantly.
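The time-of-flight principle Sweet describes comes down to simple arithmetic. The light pulse covers the distance to an object twice, so the distance equals the speed of light multiplied by the round-trip time, divided by two. The Python sketch below illustrates this under idealized assumptions: a single clean pulse, with light traveling at its vacuum speed. The function names are hypothetical, and this is not how Apple’s sensor or software is actually implemented.

```python
# Speed of light in a vacuum, in metres per second (close enough to air for this sketch).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0
METRES_PER_FOOT = 0.3048


def distance_from_round_trip(seconds: float) -> float:
    """Distance to the target in metres; the pulse travels out and back, so halve it."""
    return SPEED_OF_LIGHT_M_PER_S * seconds / 2.0


def round_trip_time(metres: float) -> float:
    """Seconds for a pulse to reach a target at the given distance and return."""
    return 2.0 * metres / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    max_range_m = 16 * METRES_PER_FOOT  # the 16-foot range quoted in the article
    t = round_trip_time(max_range_m)
    print(f"{max_range_m:.2f} m -> {t * 1e9:.1f} ns round trip")
```

Run as a script, it shows that an object at the edge of the quoted 16-foot range returns its reflection after only about 32.5 nanoseconds. That tiny window is why time-of-flight sensors depend on very precise timing.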

Time-of-flight technology is used in terrain mapping, including on Mars probes, and lidar scanners let self-driving cars sense the objects around them. Cupertino first added a LiDAR Scanner to the 2020 iPad Pro and has now brought it to the iPhone 12 Pro.

Apple embraces augmented reality

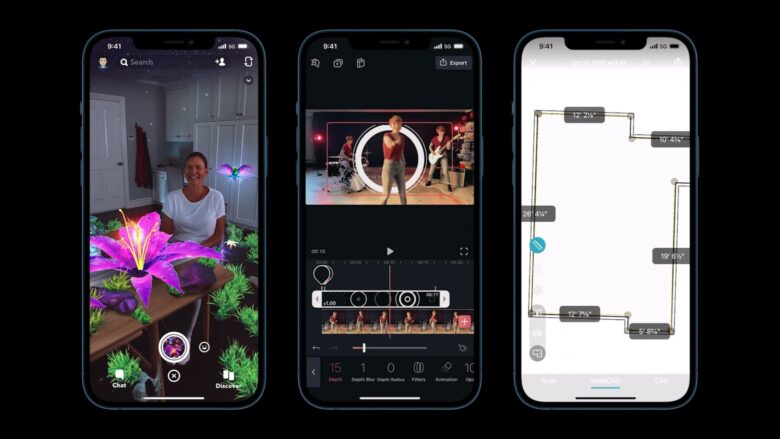

Apple built lidar into the iPhone 12 Pro models explicitly for augmented reality applications. AR overlays computer-generated images onto the real world, and therefore needs to know exactly where real-world objects are.

“Lidar makes iPhone 12 Pro a powerful device for delivering instant AR and unlocking endless opportunities and apps,” noted Sweet. But Apple didn’t get more specific at Tuesday’s event. Earlier unconfirmed reports indicated that Apple is working on a shopping-oriented AR application that would be built into iOS 14. If such an app exists, Apple kept it under wraps at the iPhone 12 launch.

Games are the most obvious use of AR. Many people will remember the Pokémon Go craze of a few years ago. The LiDAR Scanner in Apple’s newest handset could have made that experience considerably better.

Among the biggest benefits of this 3D-mapping scanner is occlusion. With an accurate sense of where all the real objects are in a space, an AR app can show virtual objects moving around physical ones, including passing behind them, where they are correctly hidden from view.
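Occlusion can be sketched as a per-pixel depth comparison. A pixel of a virtual object is drawn only where the object is closer to the camera than the real surface the depth map reports at that pixel. The Python sketch below assumes a real-world depth map and a virtual-object depth buffer of the same size, both in meters. The function name and data layout are hypothetical illustrations, not ARKit’s API.

```python
from typing import List, Optional

# Real-world depth per pixel, in metres (what a lidar depth map provides).
DepthMap = List[List[float]]
# Virtual-object depth per pixel; None marks pixels the virtual object does not cover.
VirtualDepth = List[List[Optional[float]]]


def visible_mask(real_depth: DepthMap, virtual_depth: VirtualDepth) -> List[List[bool]]:
    """True where the virtual object is in front of the real surface and should be drawn."""
    mask = []
    for real_row, virt_row in zip(real_depth, virtual_depth):
        mask.append([v is not None and v < r for r, v in zip(real_row, virt_row)])
    return mask


if __name__ == "__main__":
    # A real wall 3 m away, with a real chair 1 m away in the right-hand column.
    real = [[3.0, 3.0, 1.0],
            [3.0, 3.0, 1.0]]
    # A virtual ball 2 m away covering the middle and right-hand columns.
    virtual = [[None, 2.0, 2.0],
               [None, 2.0, 2.0]]
    for row in visible_mask(real, virtual):
        print(row)
```

Here the ball shows only in the middle column. On the left it does not exist, and on the right the closer chair hides it. A real renderer would run a comparison like this for every pixel of every frame, typically on the GPU, rather than in a Python loop.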

Photo: Apple

Lidar is about more than AR

While Apple emphasized the augmented reality uses of the iPhone 12 Pro LiDAR Scanner, that’s not its only use. The sensor also boosts camera performance, making autofocus in low light up to 6x faster.

Cupertino also brought up room scanning, including a real-world example. A video demo showed Jig Workshop Pro using an iPhone 12 Pro’s LiDAR Scanner to capture all the contours of a workspace. With AR, the application can be used to lay out a production facility using 3D models of machines and workstations.

Preparing for the future

With rare exceptions like Pokémon Go, augmented reality hasn’t caught on. But Apple seems to reason that phones need to get better at scanning the spaces around us before AR apps can become more realistic. Hence the iPhone 12 Pro.

The goal is apparently to break AR out of a chicken-and-egg problem. Until now, there was little point in developing advanced AR applications because no phones could take advantage of them. That’s no longer true, so Apple must now hope that developers will get to work creating powerful AR apps that justify including a LiDAR Scanner in its flagship iOS devices.

It might already be happening. On Wednesday, Snapchat unveiled a Lens Studio update that takes advantage of the 3D scanner.