Coming to iPhone and Mac is a tool that examines images looking for cats and dogs. But the goal isn’t an app that lets people walk around with an iPhone identifying the species of random critters. As fun as that might be, Apple is using machine learning to give developers a powerful tool for identifying objects of any type in images.

VNAnimalDetector is probably the most fun part of Apple’s Vision framework, as it lets developers add that cat or dog detector to their applications in just a few lines of code.
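For a sense of what "a few lines of code" can look like, here is a minimal Swift sketch, not Apple's sample code. It uses `VNRecognizeAnimalsRequest`, the request class the Vision framework provides for recognizing cats and dogs, together with `VNImageRequestHandler`. The function name `detectAnimals(inImageAt:)` and the shape of its return value are made up for illustration, and the code assumes a recent SDK in which the request's `results` property is already typed.

```swift
import Foundation
import Vision

/// Hypothetical helper: returns each animal Vision finds in the image at `url`,
/// with its label, confidence, and normalized bounding box.
func detectAnimals(inImageAt url: URL) throws
    -> [(label: String, confidence: Float, box: CGRect)] {
    // Ask Vision to look for the animals it knows about (cats and dogs).
    let request = VNRecognizeAnimalsRequest()

    // The handler loads the image and runs the request on it.
    let handler = VNImageRequestHandler(url: url, options: [:])
    try handler.perform([request])

    // Assumes a recent SDK where `results` is [VNRecognizedObjectObservation]?.
    let observations = request.results ?? []

    // Each observation carries one or more candidate labels; keep the first.
    return observations.compactMap { observation in
        guard let best = observation.labels.first else { return nil }
        return (label: best.identifier,
                confidence: best.confidence,
                box: observation.boundingBox)
    }
}
```

An app might call `try detectAnimals(inImageAt: photoURL)` on a background queue, where `photoURL` is whatever image file it wants to check, and then draw the returned boxes over the picture.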

Intro to the Vision framework for non-programmers.

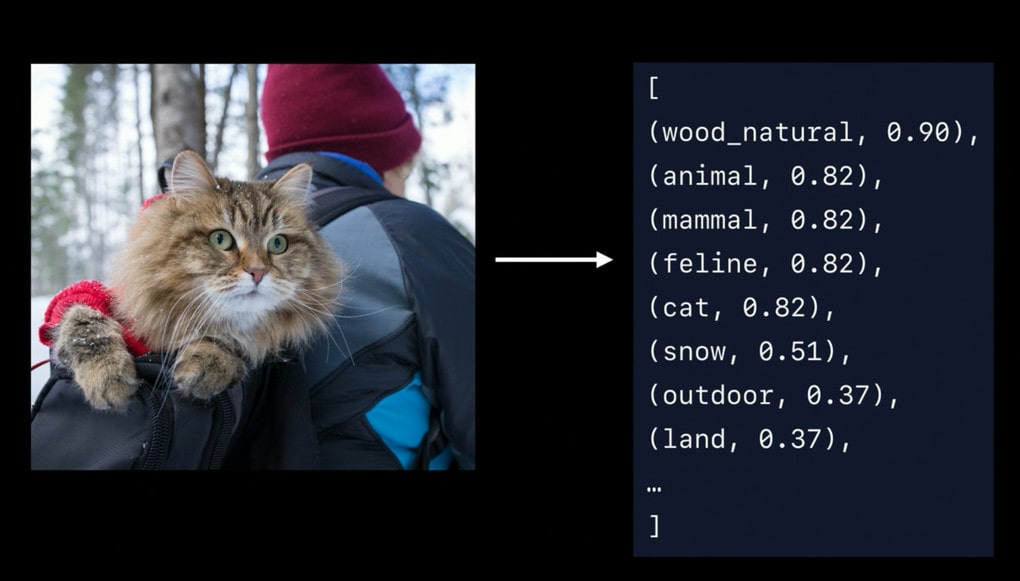

But that’s barely the tip of the iceberg. As shown in the example above, the Vision framework can tell that a cat is out in some snowy woods. This feature can be built into an iOS, iPadOS or macOS application very easily because Apple has done all the hard work.

Apple’s goal seems to be to assist developers in creating software that will help all of us sort through the huge collections of images we’re all generating. As just one example, Vision makes it a snap for an application to tell whether a picture includes a cat and a bowl, so an app could easily help someone find a specific picture they took of Fluffy two years ago.
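The photo-search idea can be sketched the same way. The example below is an illustration under stated assumptions rather than a definitive implementation: it runs Vision's `VNClassifyImageRequest` on one image and checks whether every wanted label appears with reasonable confidence. The function name `imageContains(_:at:minimumConfidence:)`, the 0.5 threshold, and the label strings in the usage note are assumptions; the exact identifiers Vision reports may differ and should be checked on a device.

```swift
import Foundation
import Vision

/// Hypothetical helper: true if Vision's classifier reports every label in
/// `wanted` for the image at `url` with at least `minimumConfidence`.
func imageContains(_ wanted: Set<String>,
                   at url: URL,
                   minimumConfidence: Float = 0.5) throws -> Bool {
    // Ask Vision to classify the overall contents of the image.
    let request = VNClassifyImageRequest()
    let handler = VNImageRequestHandler(url: url, options: [:])
    try handler.perform([request])

    // Assumes a recent SDK where `results` is [VNClassificationObservation]?.
    let observations = request.results ?? []

    // Collect the labels Vision is reasonably sure about.
    let found = Set(
        observations
            .filter { $0.confidence >= minimumConfidence }
            .map { $0.identifier }
    )

    // The image matches only if every wanted label was found.
    return wanted.isSubset(of: found)
}
```

A photo app could run something like `try imageContains(["cat", "bowl"], at: photoURL)` across a library and keep the matches, assuming those label strings are the ones Vision actually uses.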

And that includes facial recognition. This isn’t the type enabled by the 3D sensors built into recent iPhone and iPad models, which is the easier problem. Apple’s Vision system is making advances in identifying individuals in regular “flat” images.

More information is available in the “Understanding Images in Vision Framework” presentation from WWDC 2019.