For the last thirty-five years, time after time, Apple has revolutionized the way we look at technology and dragged the rest of the industry kicking and screaming into the future. If we listed all the ways in which Apple has changed the way we interact with technology, we could fill a book, so here are some of our favorite examples of how Apple has led the tech industry every step of the way.

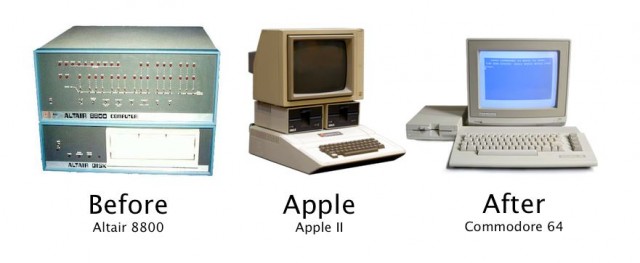

Apple didn’t design the first consumer PC: that was the Altair 8800, a computer that was sold as a DIY kit in the back of Popular Electronics magazine in 1975. Apple’s first computer, the Apple I, tried to mimic the Altair 8800’s DIYer success, but it wasn’t until 1977’s Apple II that Steve Jobs and Steve Wozniak truly revolutionized the world of home computing by releasing a consumer PC designed for ordinary users instead of hobbyists and engineers: a device that resembled a home appliance and came pre-assembled and working out of the box. Within a few years, the competition was catching up, with Commodore releasing the popular (and Apple II-like) VIC-20 in 1980 and following it up with the Commodore 64 in 1982. To this day, the impact of the Apple II is felt: while a few holdouts still build their machines from scratch, the computer industry is dominated by consumer-oriented, prebuilt machines.

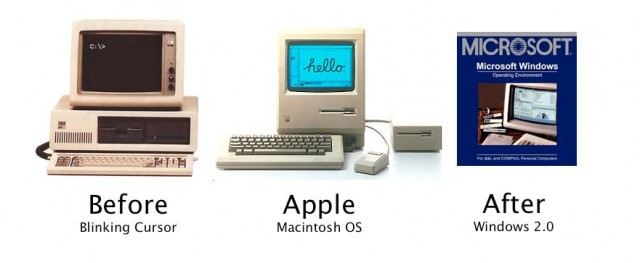

These days, we take the pretty graphical user interfaces of OS X and Windows for granted, but getting there wasn’t intuitive. The blinking cursor of operating systems like DOS is the most obvious interface for a computer, because what could be more intuitive than telling a computer what you want it to do in English?

The only problem is that computers weren’t smart enough to understand English and to work out what users wanted them to do, so computers had to have their own language and syntax to accept commands… and what had started out as an intuitive idea became a very high barrier to entry for most amateurs.

With Macintosh OS, Apple changed all of that by using visual metaphors to imagine a virtual space, with files as real objects that you can open to manipulate their contents, and a mouse pointer working like a finger tip tracing along a desktop.

Apple’s desktop model was such an amazing success that the graphical user interface has been the default way we interact with our computers and gadgets ever since. First released in 1984, Macintosh OS was followed just a year later by the first version of Windows. Sure, anyone on a Mac can still drop to a Unix shell to input commands if they want to… but even then, they have to go through OS X’s GUI first.

The shift from compartmentalized machines to all-in-ones isn’t to everyone’s liking. It makes PCs harder, if not impossible, to upgrade, and it means that if one component breaks (a graphics card, say, or the display), the whole thing has to be hauled in for repair.

But there are a few big advantages to all-in-one PCs. First of all, they are easy to set up. Second, they can be designed to be more consistently attractive than traditional tower PCs. Finally, because the manufacturer has control over every component going into their PCs, well, “they just work.”

Guess which company loves to make products that just work? Yup: Apple, who revolutionized the all-in-one PC with the 1998 iMac G3.

Tower PCs haven’t gone anywhere (Apple still makes one with the Mac Pro), but they’ve become increasingly niche since the iMac’s debut. While professionals and tech heads might want a machine they can build themselves and easily swap components in and out of, most consumers just want something that is fast, looks good in their home or office and works without any problems. No wonder towers are increasingly being pushed to the sidelines.

![Before / Apple / After: How Apple Has Led The Tech Industry Every Step Of The Way [Gallery] Creative_Wallpaper_Apple_Viva_la_Revolution_018884_](https://www.cultofmac.com/wp-content/uploads/2011/09/Creative_Wallpaper_Apple_Viva_la_Revolution_018884_.jpg)