When Apple introduced ARKit in 2017, the augmented reality platform was hailed as a game-changer. Two years later, Apple’s AR push looks ready to deliver the type of experience that gets CEO Tim Cook so excited he wants to scream.

Thanks to a trio of new augmented reality tools for iOS 13, and the very real possibility of an Apple AR headset on the horizon, 2019 promises to be the start of something truly special for Apple’s augmented reality efforts.

“I personally look at AR as in a similar spot to the internet in the late ’90s,” Andrew Hart, founder of AR mapping company Dent Reality, told Cult of Mac. “Large players clearly understood the potential, and got busy hyping it to consumers. But they hadn’t quite figured out the user interface or the use cases. It started with people mostly building and designing their own novel websites, with flashy graphics and animations not previously possible. Once we learnt what those use cases were, the novelty aspects went away and the internet became genuinely useful.”

Thousands of ARKit-enabled apps now populate the App Store, but most of the big players are games like AR Dragon, The Machines or AR Smash Tanks!

Augmented reality: A world beyond games

But while AR games dominate the category, other demos are starting to emerge that showcase applications beyond gaming. Earlier this year, WWDC Scholar and Georgia Tech student Nicholas Grana showed off an ARKit iMac prototype. Simply scanning a keyboard magics up a virtual iMac screen for users to explore. While only a demo, it underlines just how powerful AR can be.

iMac in AR! Just scan a keyboard and interact with an iMac as if it is really there.

Played with #ARKit for a few hours, and ended up with a really cool prototype. Maybe desktops can be replaced by AR? Built with #ARKit on #iOS #iOS12 pic.twitter.com/iPAu7Wb01P

— Nicholas Grana (@nicholasgranaa) May 19, 2019

Similarly, mapping apps use AR to place contextual information onto real-world scenes. And that’s just the beginning. Consider, for instance, using ARKit to show visitors around an Airbnb rental. Or using AR in sportswear like swim goggles.

With Apple devices growing ever more powerful, Apple software is paving the way for an AR revolution.

New use cases for augmented reality

Some of these types of AR tools have already found their way into Apple’s software suite. I regularly use the Measure app baked into iOS 12 for checking distances.

In iOS 13, which should launch this month as new iPhones arrive, Apple uses ARKit to solve one of the most annoying problems with FaceTime. Using AR sleight of hand, Apple digitally adjusts users’ eye lines in real time. That means they can peer at their screens and still appear to be looking straight into the camera lens. Solving the FaceTime eye line problem is a small flourish in theory, but it’s a big one when it comes to making communication more natural.

Underlining the power of AR, Apple recently started hosting AR walks at its biggest retail stores. Like a virtual art gallery, these AR[T] walks show off augmented reality’s potential via amazing demos created by top artists.

Judging by previous Apple timelines, we should be sitting on the cusp of an AR revolution.

“It really took about three years for the iPhone to get decent penetration,” said Taylor Freeman, CEO of virtual reality education company Axon Park. “With that as a comparable, ARKit came out in 2017, so if we don’t see a ‘killer app’ by mid-2020, then we will have reasons to worry.”

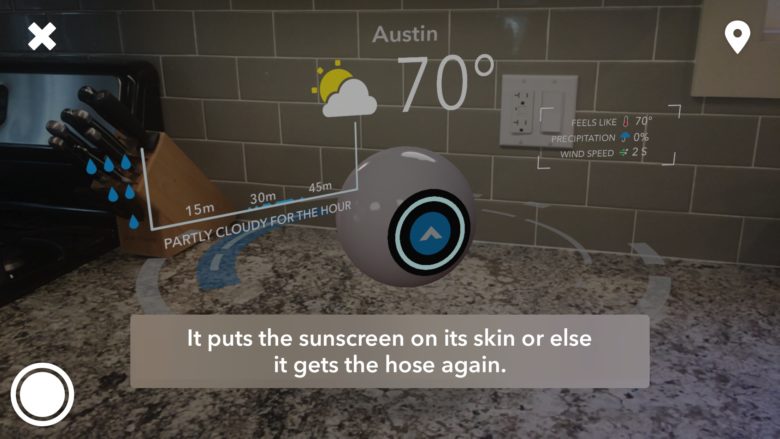

Photo: Brian Mueller/CARROT

AR is Tim Cook’s baby

It’s no secret that ARKit is Apple CEO Tim Cook’s baby. Just as Steve Jobs once gushed over the Mac as a “bicycle for the mind” and iTunes as a “music revolution,” Cook talks about augmented reality as a true tech paradigm shifter.

“We have the world’s largest augmented-reality-enabled platform, and thousands of ARKit-enabled applications in the App Store,” Cook said during Apple’s Q3 2019 earnings call. During the presentation to Wall Street investors and analysts this summer, Cook painted AR as an area ripe for growth.

“Building on this strategy, and our momentum in this area, we introduced three new AR-based technologies,” he said. “Our developers are already running with these new technologies and we think our customers are going to love some of the apps these creators have in store in the months ahead.”

The three planks of the Apple AR platform are ARKit, RealityKit and Reality Composer. Coupled with the advanced hardware in the latest Apple devices, they stand ready to spark an AR explosion.

Showing off the potential of AR

At this year’s Worldwide Developers Conference, Craig Federighi, Apple’s senior vice president of software engineering, showcased the company’s burgeoning augmented reality platform. Onstage in front of developers and reporters, he focused on AR improvements that power motion capture and “people occlusion,” which lets apps place live humans into augmented reality scenes.

“Now this is insane,” Federighi said, as a woman walked through a room filled with oversize, animated toys. “What used to require painstaking compositing by hand can now be done in real time. Now, by knowing where these people are in the scenes, you can see we can layer virtual content in front and behind them.”

Real-time motion capture

Then he showed off the motion-capture capabilities that doubtless will fuel millions of Andy Serkis-style interactions.

“And check this out: motion capture!” Federighi said as a life-size virtual artist’s articulated mannequin seamlessly mimicked the motions of the man beside it. “Just point your camera at a person, and we can track in real time the positions of their head, their torso and their limbs, and feed it as an input into the AR experience. Now, developers are going to do amazing things with ARKit 3.”

(You can see Federighi’s AR showcase, including the demos that drew applause, in the video below.)

Apple augmented reality tools

ARKit 3

Onstage at WWDC 2019, Federighi called ARKit 3, the third iteration of Apple’s augmented reality framework, a “major update.” Among other things, it uses on-device, real-time machine learning to recognize the human form and seamlessly integrate people into AR experiences.

“The new ‘people occlusion’ feature in ARKit is really freaking cool,” Brian Mueller, founder of Grailr and creator of the snarky Carrot series of apps, told Cult of Mac. “It recognizes real people are present in the video stream coming in from the device’s camera. [It then] allows AR objects to appear to ‘go behind’ those people, which greatly increases the immersion factor. It’s just insane that Apple is able to do this amount of processing of the imagery coming in from the camera completely live, as it happens.”
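The effect Mueller describes can be illustrated with a toy per-pixel depth test: wherever a detected person is closer to the camera than the virtual object, the camera image wins. This is only a sketch, not Apple’s implementation. ARKit’s real pipeline relies on on-device machine learning, and the function name `composite_with_occlusion`, the list-of-rows frame format and the sample values are all assumptions made for illustration.

```python
from typing import List, Optional

Pixel = str  # hypothetical stand-in for a color value


def composite_with_occlusion(
    camera: List[List[Pixel]],
    person_depth: List[List[Optional[float]]],
    virtual: List[List[Optional[Pixel]]],
    virtual_depth: List[List[float]],
) -> List[List[Pixel]]:
    """Blend a virtual layer into a camera frame, hiding it behind nearer people.

    person_depth[y][x] is the estimated distance in meters to a person at
    that pixel, or None where no person was detected.
    virtual[y][x] is the rendered virtual pixel, or None where the virtual
    scene is empty at that pixel.
    """
    out = []
    for y, row in enumerate(camera):
        out_row = []
        for x, cam_px in enumerate(row):
            v_px = virtual[y][x]
            p_d = person_depth[y][x]
            if v_px is None:
                # Nothing virtual here: show the camera image.
                out_row.append(cam_px)
            elif p_d is not None and p_d < virtual_depth[y][x]:
                # A person is closer than the virtual object: they occlude it.
                out_row.append(cam_px)
            else:
                # The virtual object is in front (or no person is present).
                out_row.append(v_px)
        out.append(out_row)
    return out


# A 1x3 frame: a person stands 1.0 m away in the middle and right pixels.
camera = [["bg", "person", "person"]]
person_depth = [[None, 1.0, 1.0]]
# A virtual toy covers all three pixels: 2.0 m away, except 0.5 m on the right.
virtual = [["toy", "toy", "toy"]]
virtual_depth = [[2.0, 2.0, 0.5]]

frame = composite_with_occlusion(camera, person_depth, virtual, virtual_depth)
print(frame)  # [['toy', 'person', 'toy']]
```

In the sample frame, the toy is hidden behind the person in the middle pixel but drawn in front of them on the right, which is the “in front and behind” layering shown in the WWDC demo.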

RealityKit

The second piece of the Apple AR puzzle is the new developer framework, RealityKit. This framework makes it possible to implement high-performance 3D simulation and rendering in AR apps. It enables the creation of more-compelling experiences by letting developers import detailed assets and audio sources into their AR worlds. Using RealityKit, animated objects can respond to changes in the environment.

“RealityKit was a technical restart of AR for Apple,” Dent Reality’s Hart said. “They originally built AR on top of SceneKit, their existing 3D rendering engine, but with RealityKit they started from the ground up for their rendering and interaction technologies. This gives them performance benefits, and allows them to make it simpler for regular developers to build for AR.”

Reality Composer

The third piece of Apple’s AR software initiative is Reality Composer, which makes it easier for people with limited or no 3D experience to create AR content.

Apple is “making it much easier for developers to implement AR tech into their apps,” Grailr’s Mueller said. “RealityKit makes it super-easy to import 3D assets and play around with properties and animations.”

Photo: Apple

Consistency of experience in Apple augmented reality

Together, these tools should make it easier for developers to incorporate AR into their apps. There are some limitations, however. Features like people occlusion and multiple face tracking are supported only on recent high-end devices, such as the iPhone XS, that pair Apple’s A12 or A12X Bionic chips with a TrueDepth camera. This means that apps relying on those features will not work on older iPhones.

Hart also noted that there is a problem with consistency when it comes to AR apps. This could become more troublesome as AR takes off. Lower barriers to entry (thanks to Apple’s beefed-up AR tools for devs) should mean more AR apps in the App Store. But being able to run them on only a limited subset of devices obviously would limit the growth of AR in the Apple ecosystem.

Certain Apple technologies, like the MacBook’s Touch Bar or 3D Touch on the iPhone, never truly became universally useful. Small, dedicated groups might love them, but inconsistent implementation means users don’t know what to expect from their devices. Apple could do more to help developers to create consistent AR experiences.

“One thing that’s currently missing is material examples around user experience,” Hart said. “AR is a brand-new interface paradigm, just like a GUI or a touchscreen. But there’s currently no frameworks for building really compelling AR experiences. Apple do have their AR Human Interface Guidelines, which touch upon some high-level ideas on how to present a compelling experience, such as ensuring text scales based on the user’s distance, to always appear at a consistent size. But there’s no technical framework which implements this — every developer needs to experiment with the 3D engine and develop these common experience features themselves. This is something I’d like to see Apple take a lead on — and expect they will with RealityKit 2.0.”
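The guideline Hart mentions, keeping text at a consistent apparent size whatever the viewer’s distance, comes down to simple geometry: an object’s apparent size shrinks in proportion to its distance, so scaling it up by the same proportion cancels the effect. Here is a minimal sketch of that calculation. The helper `scale_for_constant_apparent_size`, the reference distance and the minimum-distance clamp are all hypothetical and not part of ARKit or RealityKit.

```python
def scale_for_constant_apparent_size(
    distance_m: float,
    reference_distance_m: float = 1.0,
    min_distance_m: float = 0.1,
) -> float:
    """Return the scale factor that keeps a label's on-screen size constant.

    The label is assumed to look correct at scale 1.0 when viewed from
    reference_distance_m. Distances below min_distance_m are clamped so
    the label does not collapse to nothing when the camera is very close.
    """
    if reference_distance_m <= 0:
        raise ValueError("reference_distance_m must be positive")
    clamped = max(distance_m, min_distance_m)
    # Apparent size is proportional to (real size / distance), so the real
    # size must grow linearly with distance to stay visually constant.
    return clamped / reference_distance_m


for d in (0.5, 1.0, 4.0):
    print(d, scale_for_constant_apparent_size(d))
```

An app would typically recompute this every frame from the distance between the camera and the label, then apply the result to the label’s transform. That per-frame update is the kind of boilerplate Hart suggests a framework could handle for developers.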

The future is Apple AR glasses

Photo: Lewis Wallace/Cult of Mac

One thing that everyone agrees on is that Apple is just getting started with augmented reality.

“I have to see this ground-up restart, only two years into the platform, as a rededication of Apple’s commitment to AR and their future AR-based products,” Hart said. “A focus on performance obviously enables them to move towards a lightweight AR wearable product.”

This AR headset project is ultimately where users will see the true value of ARKit.

“Apple’s current work in the AR space is laying the foundation for a head-mounted display future,” Axon Park’s Freeman said. “There is certainly a lot of utility and value that can be created using flat-screen AR. But the real magic will happen when everything gets moved over to glasses.”

When will Apple AR glasses arrive?

We still don’t know when, or if, Apple’s AR glasses will become a reality. However, they could be closer than you think. In fact, assets recently popped up in iOS 13 betas that appear to reference an AR headset of some sort.

Will we get our first glimpse of an Apple headset next week at the iPhone 11 event?

“Who knows how far off [an Apple AR headset] is, but the benefit is obvious,” said Grailr’s Mueller. “Not only would AR elements be laid over what we’re actually seeing as opposed to having to view them through a phone’s screen, but the AR will also be a lot more persistent. Instead of having to launch apps, wait for them to recognize the scene around them, then add their AR elements into it, the AR elements will just always be there, laid over what we’re seeing.”

So far, nobody has been able to pull off smart glasses effectively. Google Glass became a notorious flop. Snap’s new Spectacles 3, seemingly built with AR in mind, don’t look much better. But Apple’s working to change things. The top-secret group developing Cupertino’s AR glasses recently got a new leader. Kim Vorrath is reportedly charged with “bringing order to the AR headset team.”

With fiercer, more focused leadership, Apple is surely working to make its AR headset a reality. According to analyst Ming-Chi Kuo, an Apple-branded pair of AR glasses could launch as soon as 2020.

By putting powerful software tools in the hands of developers, and powerful devices in users’ hands (and likely on our faces), Apple is laying the foundation needed to make augmented reality a force to be reckoned with.

Keep watching this space.