A tiny, low-power auxiliary processor that constantly listens for the phrase “Hey Siri” enables one of the most basic features of Apple’s AI assistant.

The processor, embedded in the iPhone’s motion coprocessor, means the “Hey Siri” detector does not have to run on the device’s main processor all day. That revelation comes in a research paper published today by Apple’s machine learning team. The paper dives deep into how Apple uses AI to power “Hey Siri.”

Siri, the AI assistant that occupies an increasingly prominent place in the Apple ecosystem, debuted in 2011 on the iPhone 4s. Today, Siri works on Macs, iPads, HomePods and more. It allows users to quickly perform routine tasks and get answers to common questions.

However, while Siri continues to improve, the feature still needs work. And Apple faces increasing competition from smarter services like Google Assistant.

Apple’s data scientists keep plugging away, making Siri smarter and more useful all the time. In the paper published on its blog today, Apple discusses how it found unique ways to use machine learning to prevent false triggers of the “Hey Siri” command.

How ‘Hey Siri’ works

Photo: Apple

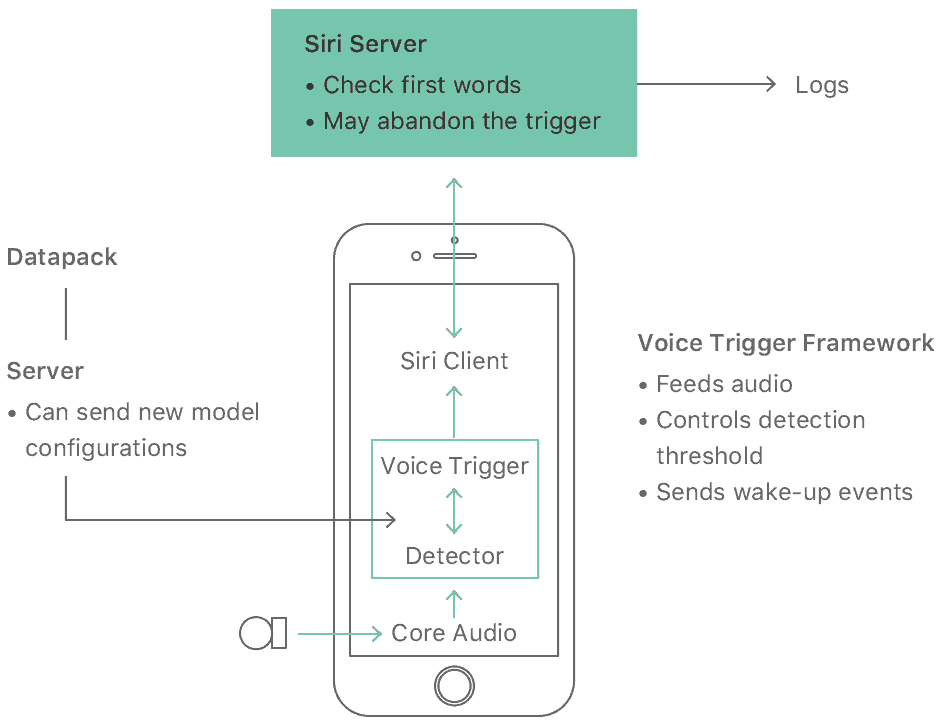

Detecting when someone actually wants to trigger Siri proves more complicated than you might expect. To pull it off, Apple uses a deep neural network (DNN) to convert the sound of your voice into a probability distribution over speech sounds. That distribution, in turn, is used to compute a confidence score that the phrase you uttered was “Hey Siri.” If the score is high enough, Siri wakes up.
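To make the idea concrete, here is a minimal Python sketch of that pipeline. It assumes the network emits one list of raw scores (logits) per audio frame and uses a softmax to turn each list into a probability distribution. The `toy_confidence` function is a hypothetical stand-in for the scoring step, which the article does not spell out. It is not Apple’s method.

```python
import math


def softmax(logits):
    """Convert raw network outputs into a probability distribution."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def toy_confidence(frames, target_classes):
    """Average log-probability of the expected sound class in each frame.

    Hypothetical scoring rule for illustration only: `frames` holds one
    list of logits per audio frame, and `target_classes` holds the index
    of the speech sound we expect to hear in that frame.
    """
    log_probs = [
        math.log(softmax(logits)[target])
        for logits, target in zip(frames, target_classes)
    ]
    return sum(log_probs) / len(log_probs)
```

A higher (less negative) score means the frames match the expected sequence of sounds more closely. A real detector would also have to cope with people saying the phrase at different speeds, which this toy version ignores.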

Apple also uses a second, lower threshold. If the confidence score clears the lower threshold but falls short of the upper one, your iPhone enters a more sensitive state for a few seconds. That means it can activate Siri more readily if you repeat the command.
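The two-threshold behavior described above can be sketched in a few lines of Python. The class name, the threshold values and the three-second window are all hypothetical, chosen only for illustration. The sketch also assumes that, while in the sensitive state, a score that clears the lower threshold is enough to trigger. The article does not say exactly how the sensitive state changes the decision.

```python
from dataclasses import dataclass


@dataclass
class WakeDetector:
    upper: float = 0.8             # hypothetical: score needed to trigger normally
    lower: float = 0.5             # hypothetical: score that counts as a near miss
    sensitive_window: float = 3.0  # hypothetical: seconds the sensitive state lasts
    sensitive_until: float = float("-inf")

    def observe(self, score: float, now: float) -> bool:
        """Return True if the assistant should wake for this score."""
        in_sensitive_state = now < self.sensitive_until
        # Assumption: the sensitive state accepts the lower threshold.
        threshold = self.lower if in_sensitive_state else self.upper
        if score >= threshold:
            self.sensitive_until = float("-inf")  # reset after a trigger
            return True
        if score >= self.lower:
            # Near miss: stay alert for a repeat of the phrase.
            self.sensitive_until = now + self.sensitive_window
        return False
```

With these made-up numbers, a score of 0.6 does not wake the assistant the first time, but the same score a second later does, because the first attempt put the detector into its sensitive state.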

Making Siri more accurate

To further increase Siri’s accuracy, Apple created language-specific phonetic specifications of the “Hey Siri” phrase for its model. In English, the company uses two variants. In one, the first vowel in “Siri” is pronounced as in “serious.” In the other, it is pronounced as in “Syria.”

If you’re interested in machine learning and want to know about how Cupertino uses it in speech recognition, give Apple’s full paper a read.