Damon Rose is 46, and has been blind since he was a teenager. In 2012, the iPhone changed his life.

Rose, a senior broadcast journalist at the BBC, uses GPS to get around unfamiliar areas, with an earbud stuck in one ear, and uses a third-party app that tells him what shops he’s walking past. It’s “amazingly helpful,” he told Cult of Mac. “I can look at menus on restaurant websites while I’m sitting there with my first drink of the evening,” instead of having the waiter read out the menu.

The iPhone might not have been the first phone with accessibility features, but it was certainly the first popular pocket computer to be easily usable by the blind and the hearing-impaired.

“The blind community loves Apple because it was pretty much the first to embrace full-on access,” Rose said. “Microsoft had a feeble screenreader built into it, but it just read the odd bit [of text] off the screen.”

Today, iOS is pretty much the default operating system for anyone who has trouble reading the screen or hearing. Partly that comes down to ubiquity — there are around a billion iOS devices out there — but it’s also because Apple bakes accessibility into the essence of the iPhone and iPad, instead of adding it on top like frosting, the way others do.

“Google is trying,” Donal Fitzpatrick of the School of Computing at Dublin City University told Cult of Mac, “though in my view their stuff still has more holes than Swiss cheese.”

2009: Apple reinvents accessibility

Apple added its first accessibility feature in iOS 3 (then still named “iPhone OS 3”). The iPhone 3GS was a landmark for all users because it finally added copy-and-paste functionality, but it also added VoiceOver, a technology borrowed from the Mac and — somewhat improbably — also found on the iPod shuffle.

“Sighted people touch objects on the screen and make things happen,” said Rose. “Blind people have to trail their finger around to generate noise and then double-tap to make the action happen.”

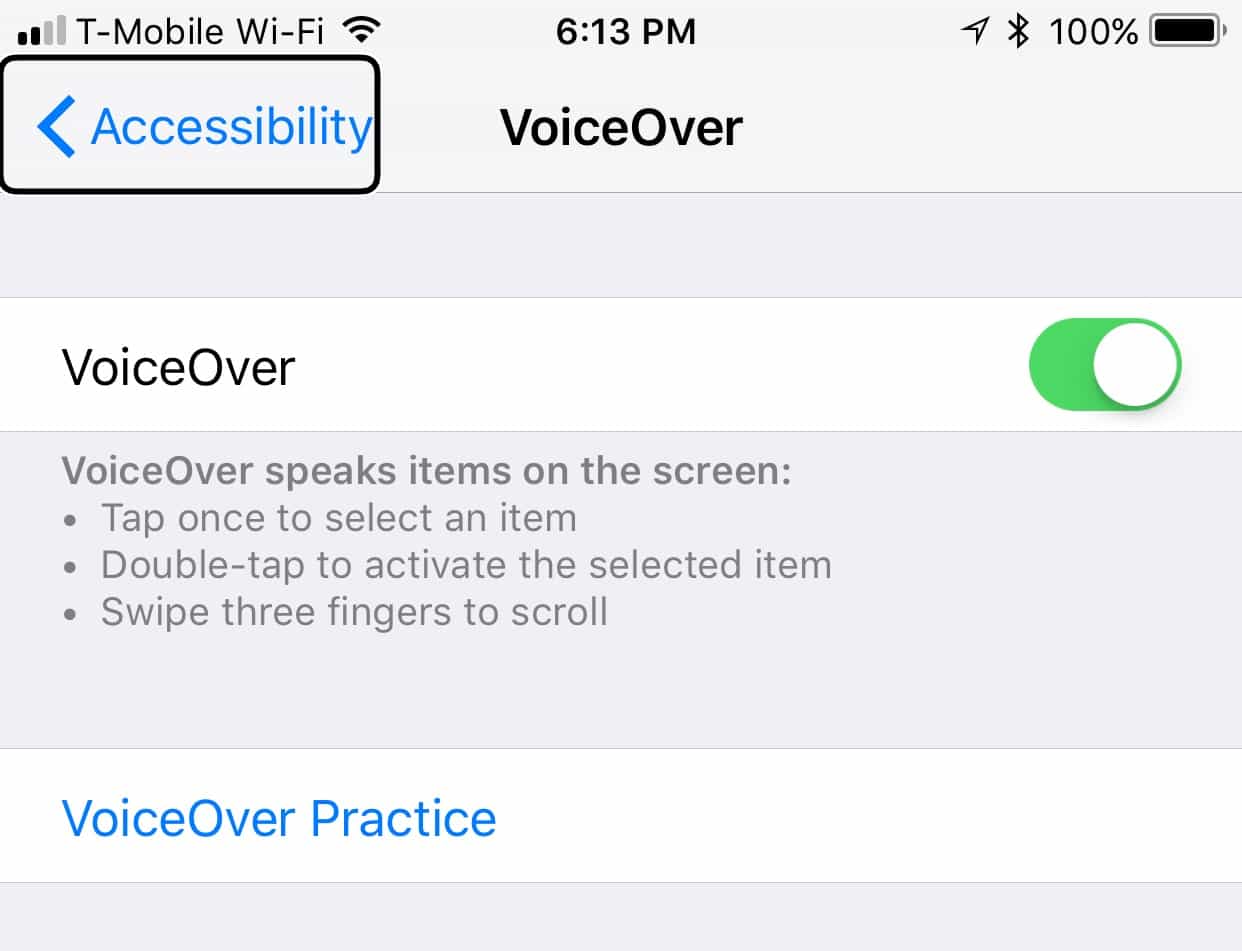

If you’ve never used VoiceOver, you can try it right now. It’s already on your iPhone — a fact that makes all the difference.

“I had to spend 200 quid on a Hawking-esque screenreader for [my Nokia handset] called TALKS,” said Rose, “whereas VoiceOver is built in and free as all accessibility tools should be.”

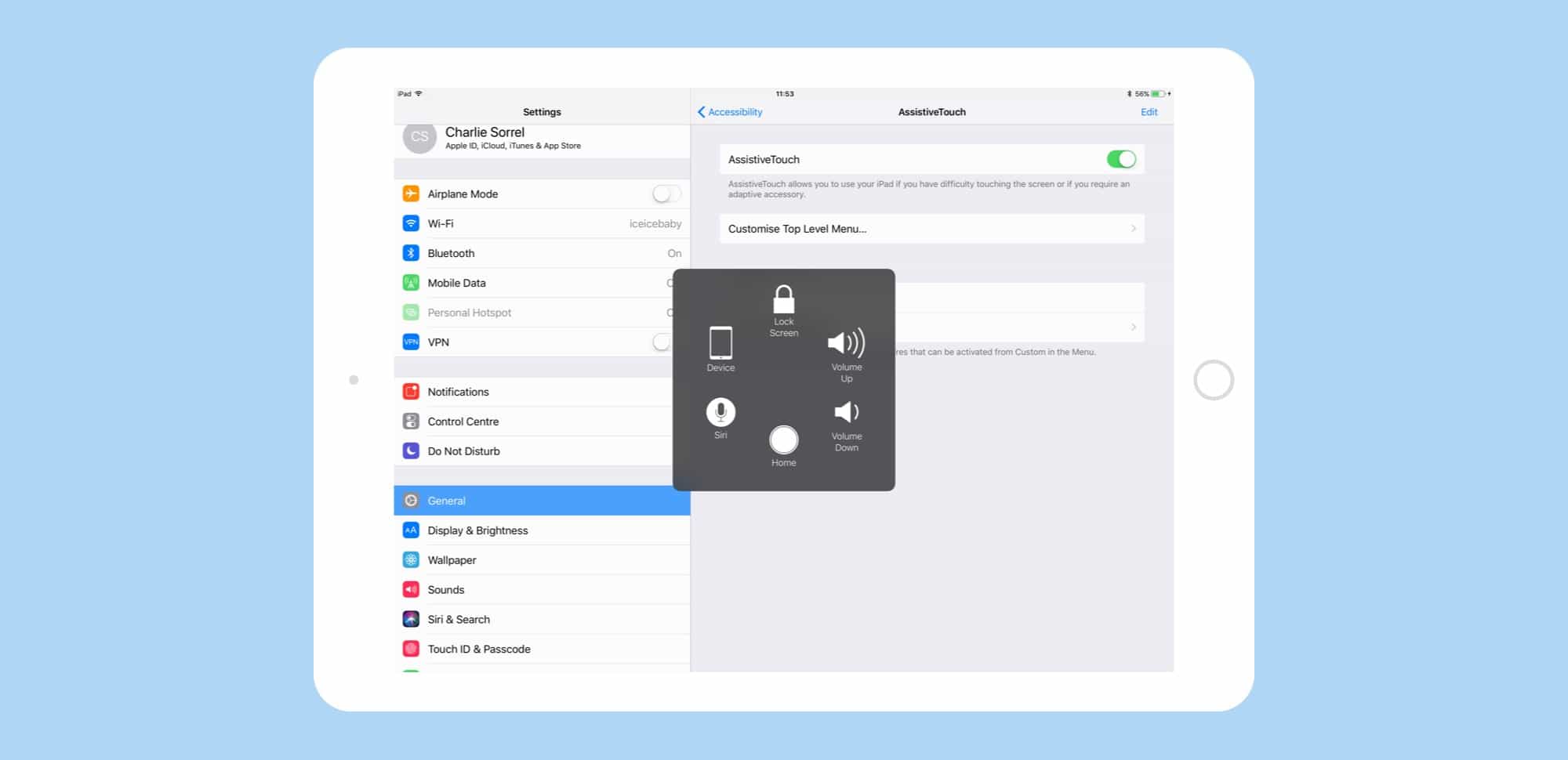

Screenshot: Apple

VoiceOver can be toggled on in Settings > General > Accessibility > VoiceOver. Be careful, though, because it can be quite a shock. As you run your finger over the screen, your iPhone will now talk to you double-quick, telling you what you’re touching. It’s overwhelming at first, but you quickly get used to it, in the same way we all filter out the ads and other visual noise that crowd every web page.

Once you’ve found what you’re after, you double-tap it, and the button is pressed. Text is read aloud. It’s possible to speed through iOS in this way, and there are some amazing features that are quite unexpected to a sighted user.

Accessibility is baked into iOS

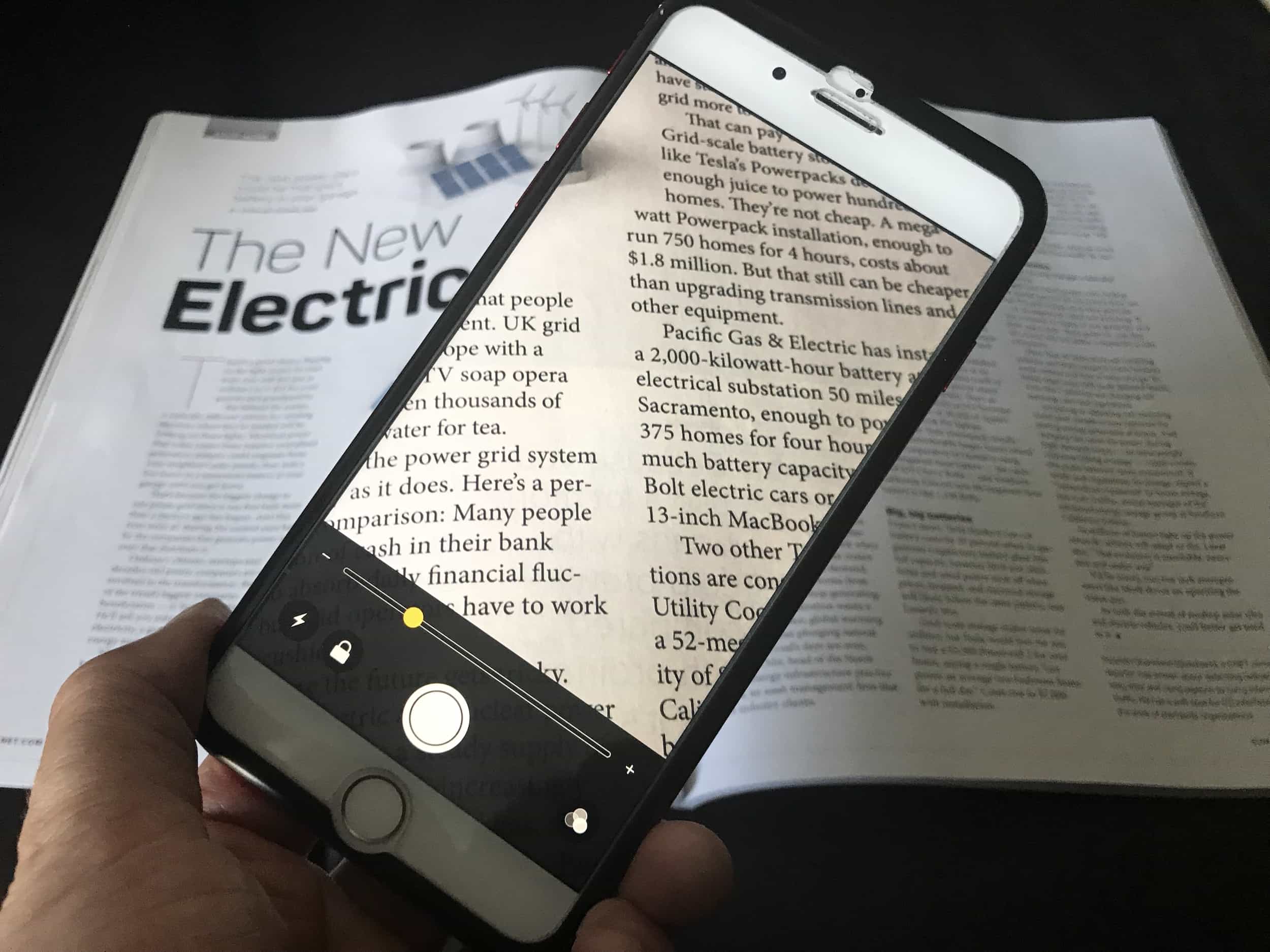

For instance, when typing, you can have your iPhone call out each letter as you type, or just the words. When taking a photo, the iPhone tells you how many faces are on the screen (and where they are), using the same face detection the camera relies on to focus and recognize smiles. You can compose a photo with the subject nicely off-center, and in focus, all without seeing the screen.

There’s another big advantage to VoiceOver, too — you can use it with the screen’s backlight switched off, which means your iPhone can go for days without charging.

Following that first venture into VoiceOver, Apple added simple things like closed-caption support for subtitles, special high-contrast views to make reading easier, deep hooks to connect hearing aids and other accessibility devices to the iPad and iPhone, and things like the handy triple-tap Magnifier that uses the iPhone’s camera to make the real world bigger.

This last feature, the iOS Magnifier, shows why accessibility is such a big deal. It’s not a case of spending a huge amount of development time just to help “other” people. Accessibility needs fall on a scale. Some folks might be totally blind, but many more of us wear glasses. And with the world’s population aging, making text larger will never not be appreciated.

A slow start for iPhone accessibility

At first, use of iOS accessibility features was patchy.

“The fact [the iPhone] had a flat, featureless touchscreen made me and all other blind people believe that we’d never ever be able to use it,” said Rose. “Things have to be tactile, like Braille, for us to use — right?”

Indeed, Rose found that VoiceOver had such a steep learning curve that he gave his iPhone to his then-girlfriend (now his wife) and went back to his Nokia. (Like Rose, Fitzpatrick also used TALKS on a Nokia Symbian phone.)

However, like anything Apple truly cares about, VoiceOver improved, year after year.

“By 2012, I had been persuaded by all my blind pals that the iPhone was now considerably faster, making VoiceOver work better and be more intuitive,” said Rose.

The iPhone in 2017 is now a powerful accessibility tool. Fitzpatrick uses it for navigation, keeping up on football scores and all the usual stuff. But he also uses his iPad for coding and as a part of his job as a computing lecturer at Dublin City University.

That’s just the beginning. Because accessibility is so easy for developers to add to their apps (basic implementation is as simple as adding captions to buttons for VoiceOver to read out loud), all kinds of useful apps have been built.

“There are apps which will tell you what color your clothes are when you drag them from your wardrobe in the mornings,” said Rose. “In the future I gather that gas and electricity smart meters will throw their results onto your smartphone, too, and there must be ways of interfacing with ATMs, ticket machines, etc.”

By hooking into other gadgets, the iPhone can bring its comprehensive accessibility features to the outside world. The Apple Watch will soon talk to gym equipment, for example, which opens the way for more accessible gyms.

Apple’s accessible future

Fitzpatrick would like to see more of these kinds of connections, and sees the Apple Watch as a conduit. For instance, “a blood sugar monitor, which can read your blood through the wrist strap in the watch, could help an awful lot of blind people because many of the sugar testing machines are not accessible,” he said. “Blind people with diabetes would massively benefit from this.”

And Apple Watch’s upcoming integration with glucose monitors could even help to prevent blindness in the first place.

“Sighted people may not go blind if they have an always-on sugar monitor on their wrist,” said Fitzpatrick, since diabetes is a big cause of blindness.

Meanwhile, Apple continues to improve accessibility on the iPhone. iOS 11 adds VoiceOver to drag-and-drop and introduces Type to Siri (meaning Apple’s AI assistant can be accessed without speech). It can even detect text in photographs and read it out.

That means Rose won’t even need to search for restaurant menus online anymore, only to be shunted off to a Facebook page or forced to download a PDF. Instead, he will be able to snap a photo of the day’s specials, and have Siri read it out to him.