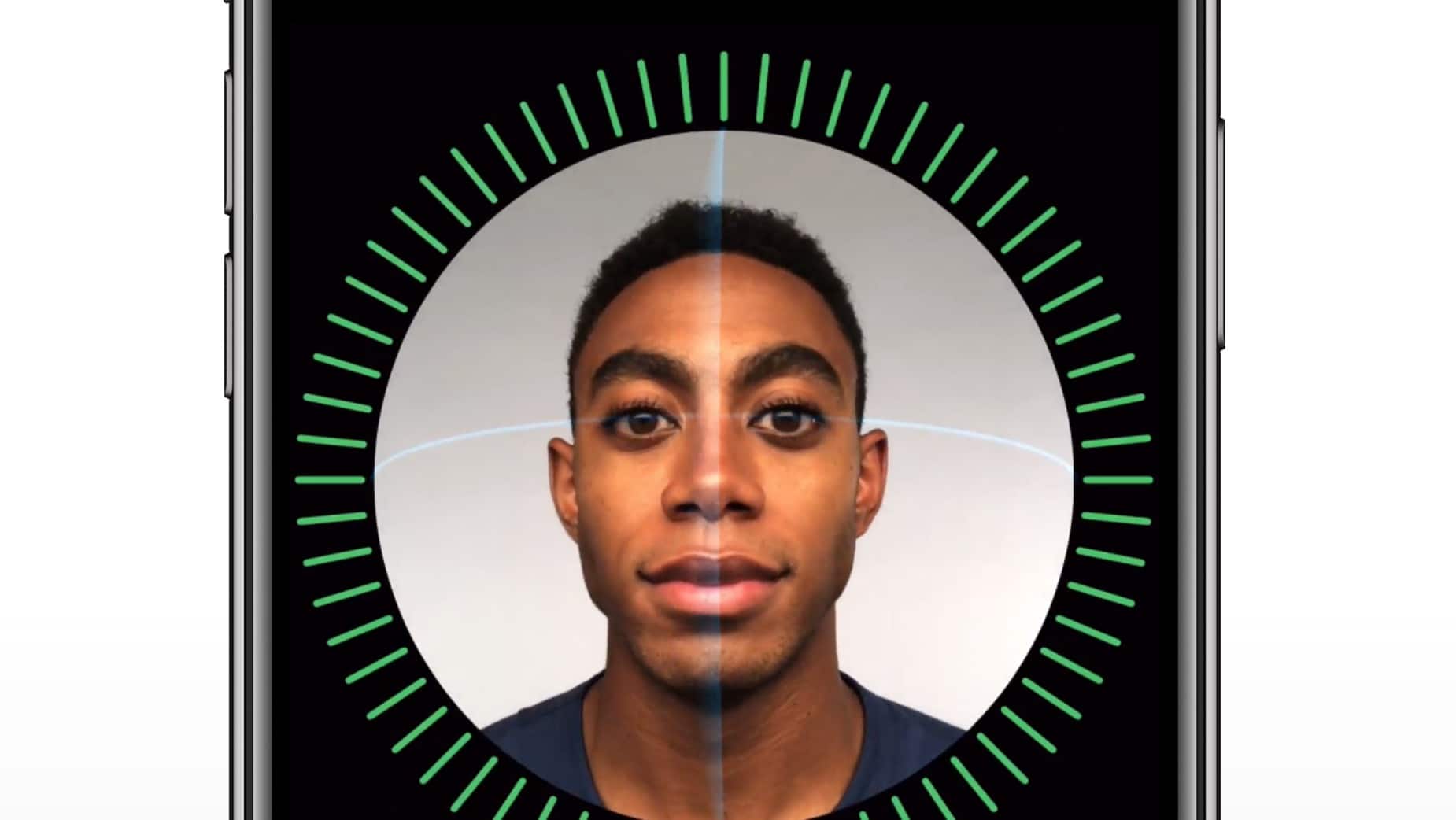

Apple says it has done extensive testing to ensure that Face ID treats everyone equally when the feature launches next month with the iPhone X.

Face ID has attracted a slew of security questions from the public wondering how Apple plans to keep biometric data private. U.S. Sen. Al Franken also asked what Apple is doing to protect against racial, gender or age bias in Face ID.

Apple finally responded to the senator’s question, providing a deeper look into the testing process.

To make Face ID accurate across a wide range of users, Apple says it worked with a diverse group of participants from around the world during testing. Cynthia Hogan, Apple’s vice president of public policy for the Americas, released the following statement today:

“The accessibility of the product to people of diverse races and ethnicities was very important to us. Face ID uses facial matching neural networks that we developed using over a billion images, including IR and depth images collected in studies conducted with the participants’ informed consent. We worked with participants from around the world to include a representative group of people accounting for gender, age, ethnicity, and other factors. We augmented the studies as needed to provide a high degree of accuracy for a diverse range of users. In addition, a neural network that is trained to spot and resist spoofing defends against attempts to unlock your phone with photos or masks.”

Apple’s Face ID feature will get its first big test on November 3, when millions of customers finally get it into their hands. The security feature for unlocking the iPhone X is powered by the TrueDepth camera system, which can detect a person’s face even in the dark.

Face ID replaces the traditional Home button and Touch ID on the high-end iPhone X. A recent rumor claimed that Face ID could make its way to all iPhones next year.

9 responses to “Apple explains how it tried to prevent Face ID from being racist”

“Apple’s Face ID feature will get its first big test on November 3, when millions of customers finally get it into their hands.” Don’t you mean thousands, not millions? Rumor has it that Foxconn has just sent Apple a shipment of 46,000 devices. Going off of that data, it seems likely that there will only be about 50k – 100k iPhone X’s available (including both in-store purchases and online shipments). The real test of FaceID will occur sometime next year when the device finally becomes available in greater quantities.

yeah i’ve repeatedly heard rumors of shipment delays and production issues etc…

It’s called “artificial shortage”. We seem to hear that every year with the hottest and latest tech and toys.

Really… this is a concern… don’t you think that Apple is probably doing what it can to prevent this sort of stuff? Come on… report something worthwhile…

Al Franken is an idiot who will play the race card at every opportunity.

Doesn’t FaceID use infrared? Skin color shouldn’t matter much.

Ugh!

PC out of control

There is a history of problems with face recognition of minorities, with people being unable to get into their place of work, or being stuck somewhere in a building, because of this very issue.

https://gizmodo.com/why-cant-this-soap-dispenser-identify-dark-skin-1797931773

Al Franken wasting everyone’s time again.