Future iPhone software and cameras could support sign language recognition, alongside a range of other in-air interface gestures, according to a patent application published today.

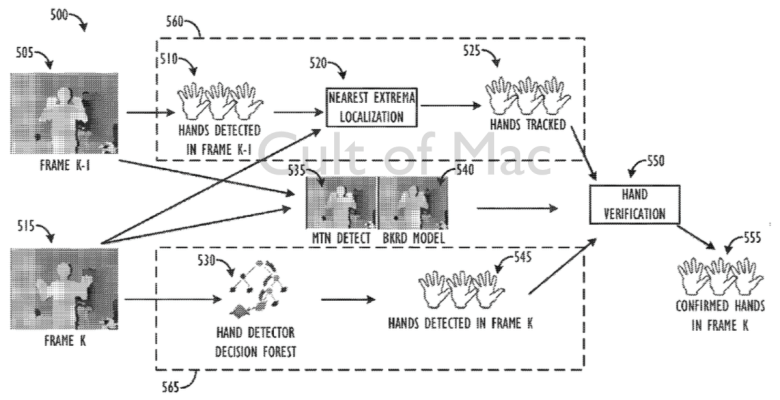

Titled “Three-Dimensional Hand Tracking Using Depth Sequences,” Apple’s patent application describes how devices would be able to locate and track hands through three-dimensional space in video streams, similar to the face-tracking technology Apple already employs for its Photo Booth app.

Photo: USPTO/Apple

There are plenty of possibilities for this kind of technology, ranging from the aforementioned sign language support to pose and gesture detection that would add new interface controls to iOS devices.

Apple has been working in this area for a while now, with its 3D head tracking patents first coming to light more than half a decade ago in 2009. Those efforts took a step forward in late 2013, when Apple acquired PrimeSense, the Israel-based company behind the 3D motion tracking in the original Xbox Kinect.

Patent applications don’t guarantee that an actual product will ship, of course, but it’s great to see evidence that Apple is continuing to focus on new ways to push its current touch interface even further.

What possible applications could you envisage for Apple’s hand-tracking patent? Leave your comments below.

Source: USPTO

Via: Patently Apple

3 responses to “Your next iPhone may be able to read sign language”

Good luck with that.

Hearing people often think that sign language is just a “bit of winking around with hands”…

I am educated in Swedish Sign Language, which shares a lot with ASL. We have even imported words from ASL. Most Swedish deaf people know a little ASL. Sign language varies; it is its OWN language, standing on its own legs, with its own grammar.

The difference between Swedish and Swedish Sign Language is like the difference between Swedish and Chinese!

I think it’s the same with American/English and ASL……

To make it even harder: own grammar, own syntax, and one can easily sign multiple “words” at the same time!

Then you have mixes: anything from people who were born deaf, who use a very “deaf” sign language, to people who lost their hearing as adults (they follow the English/Swedish grammar, syntax, etc.).

You also have Sign-As-Support (literally translated from Swedish; I don’t know the English term), a “tiny version” of sign language used with people who have other disabilities on top of their hearing problem. That could be people with, for example, brain damage.

…and you have a specially developed version of sign language for people who are deaf-blind, a tactile version…

They hold their hands over yours and you have to adjust some of your signing… It is really an experience…

… and to make the programmers go totally bonkers: you can express whole sentences in the blink of an eye. “Yes, I have seen him, I guess he is still around…” is expressed with both hands, a nod, eyebrows, mouth – AT THE SAME TIME.

… and to confuse things, many signs differ depending on where you grew up (dialects). I, for example, know five different signs for the noun “orange” (the fruit), three for “tree”, five for “doll” and probably the same for “coffeemaker”, “wind”, etc. And just by watching somebody sign, you soon get which boarding school they grew up in…

…Moderation is also expressed with your whole body, your face, etc. There is a difference between the pretty neutral signing of “I am bored” and “I am so bored I feel that I can’t take another minute of this shit”.

Same signs, but the intensity, facial expressions, direction of the body, etc. are different…

There is probably a ton more info on the subject; this is just the tip of the iceberg…

So, again, good luck with that…

Only people who don’t speak ASL would think in-air gesture recognition could read and interpret it accurately. Please trust me when I tell you: That’s not gonna be enough.

I think this is an excellent step forward by Apple in embracing the deaf community, as always, while other cellular phone companies are not advancing. Nothing is perfect. But perhaps in the future the recognition software, if achieved, could be set to different sign language word banks for a message, just as iPhones can currently be set to the language they read and speak in. It’s amazing just to realize the technology has come this far in its promise to continue moving forward.