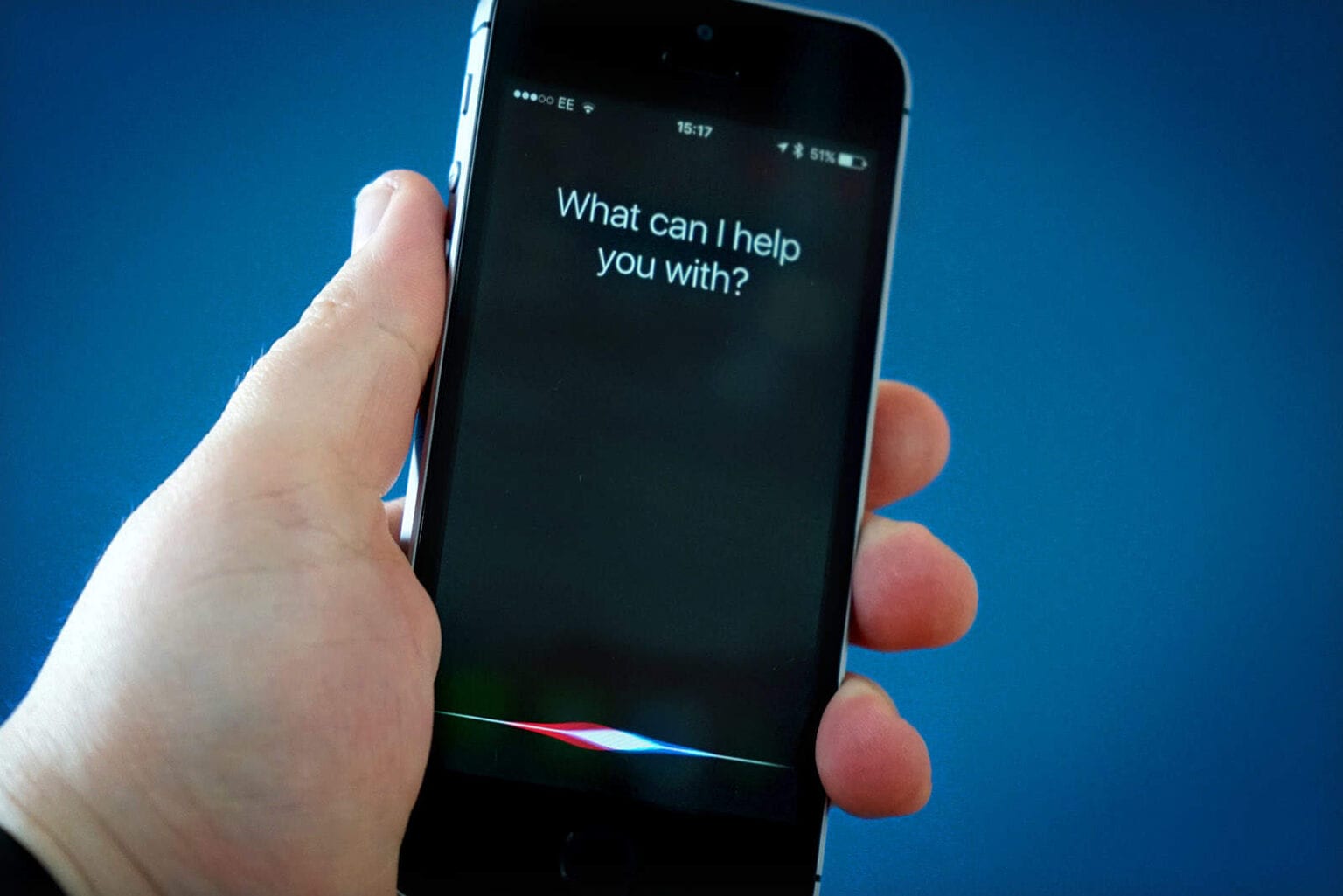

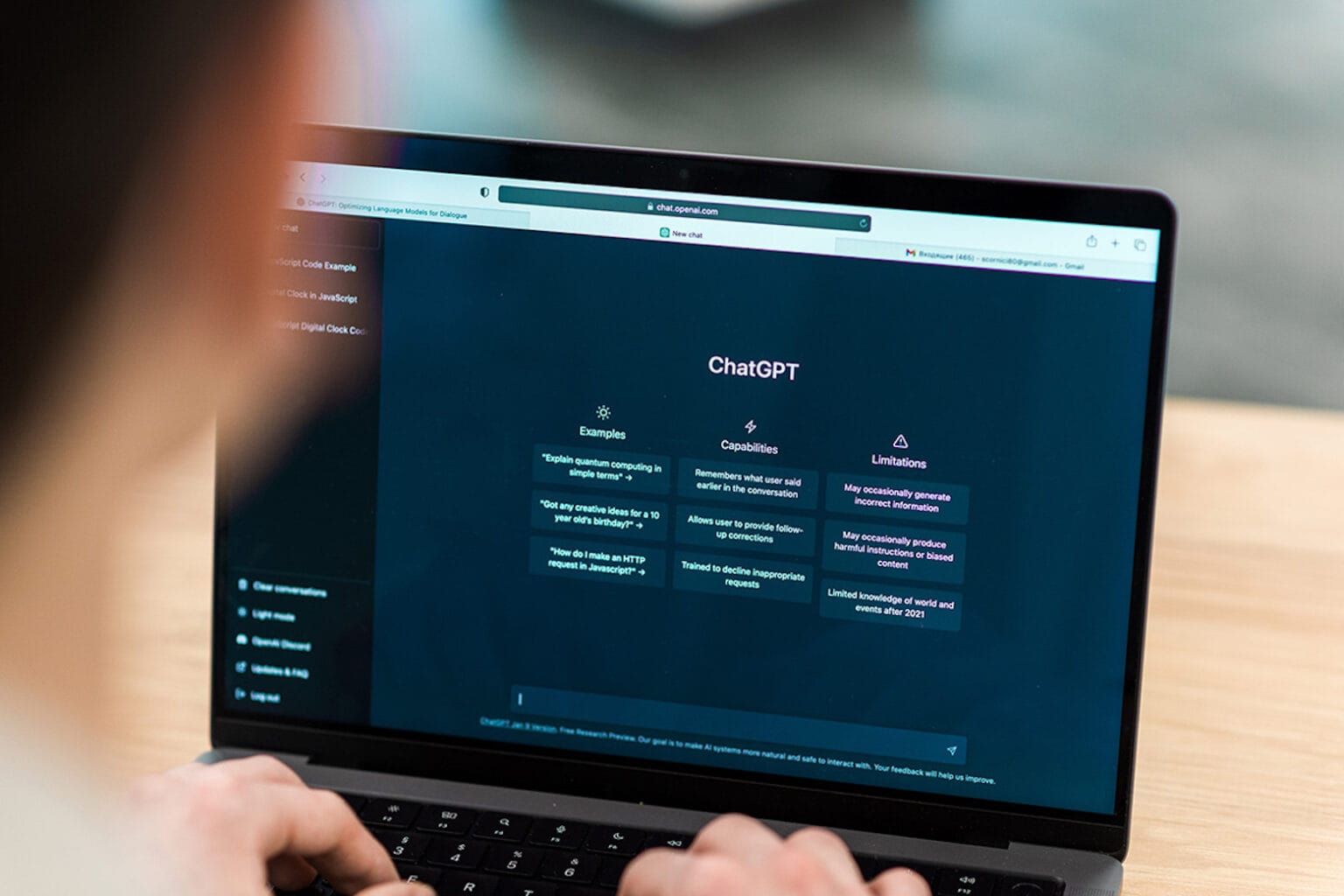

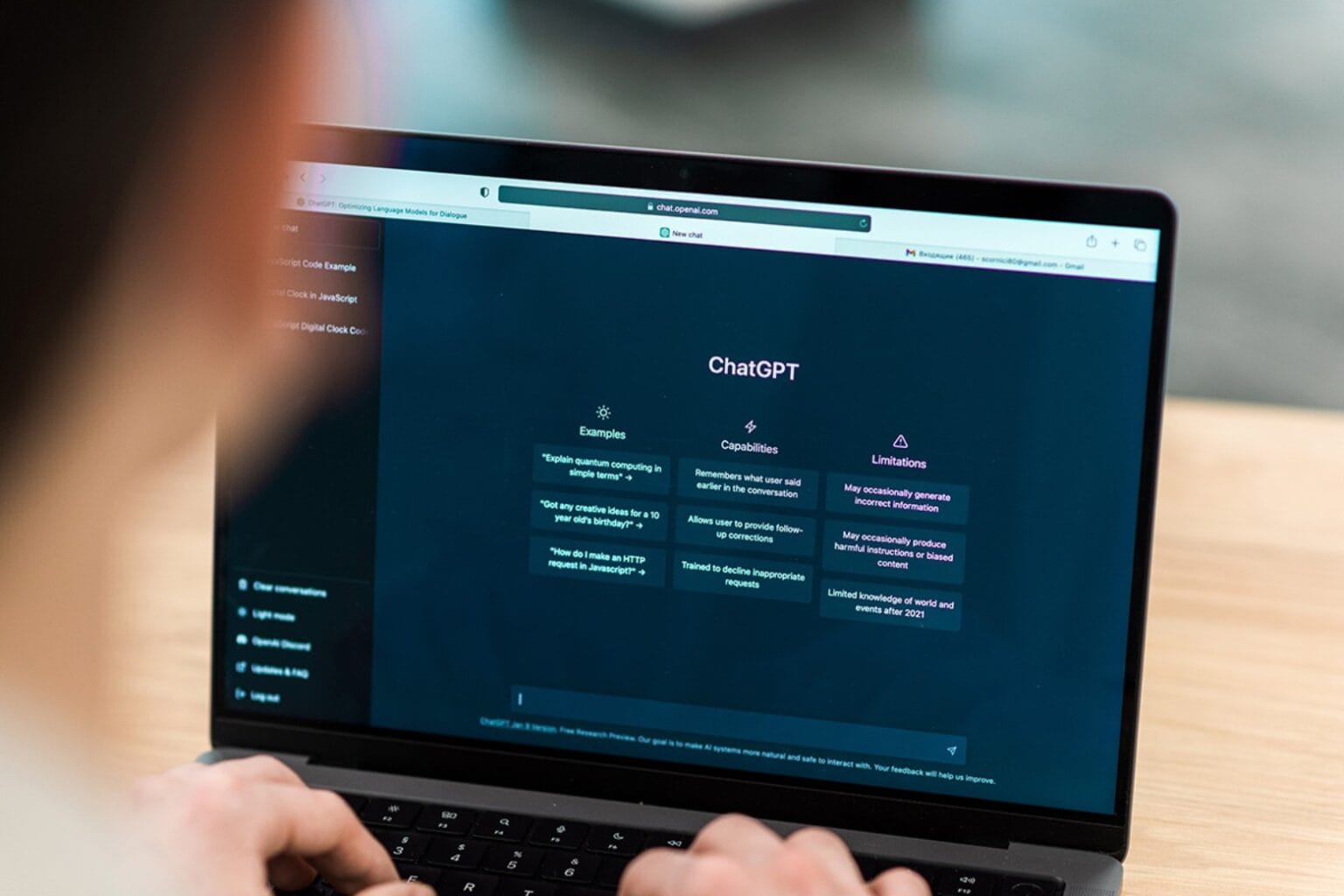

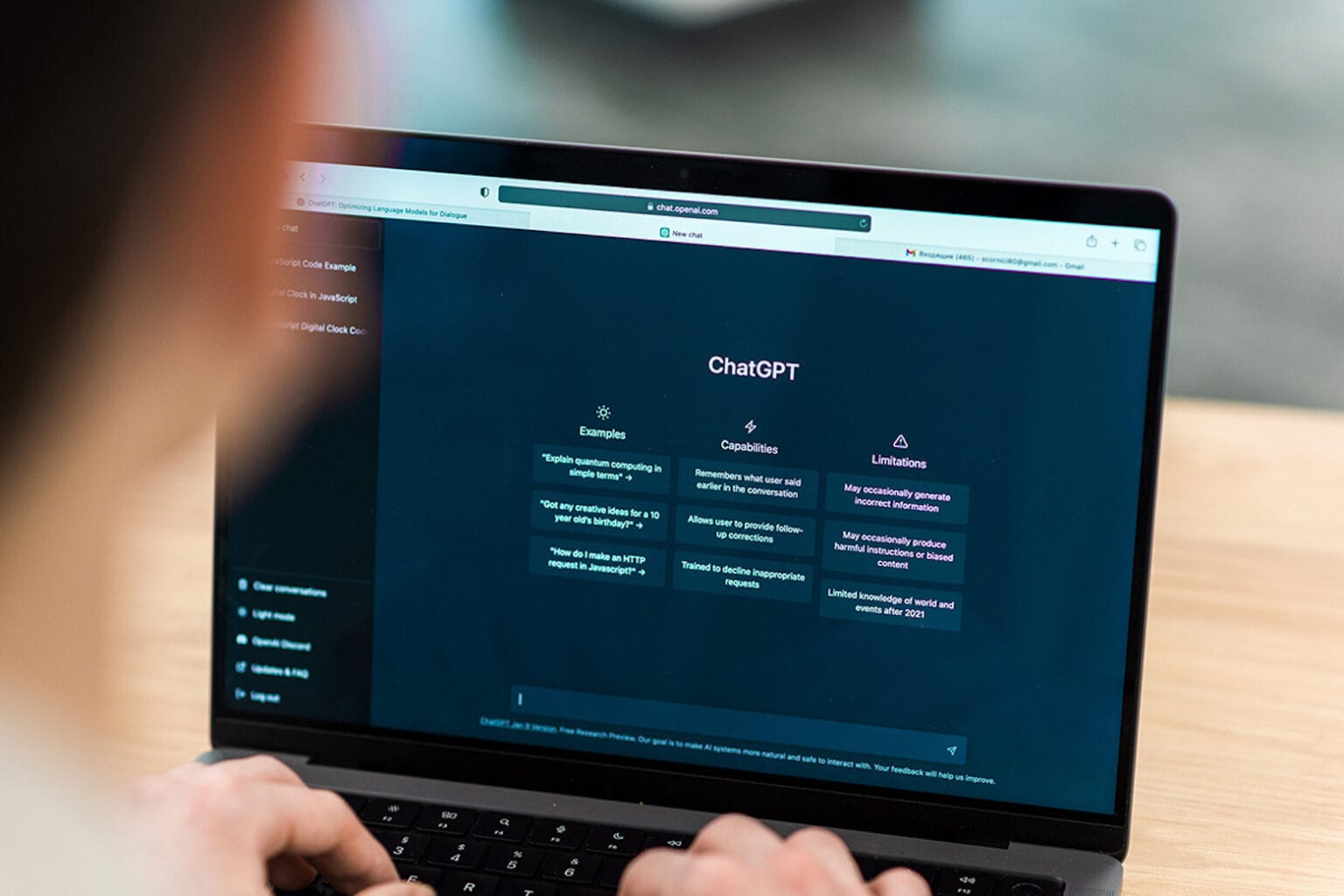

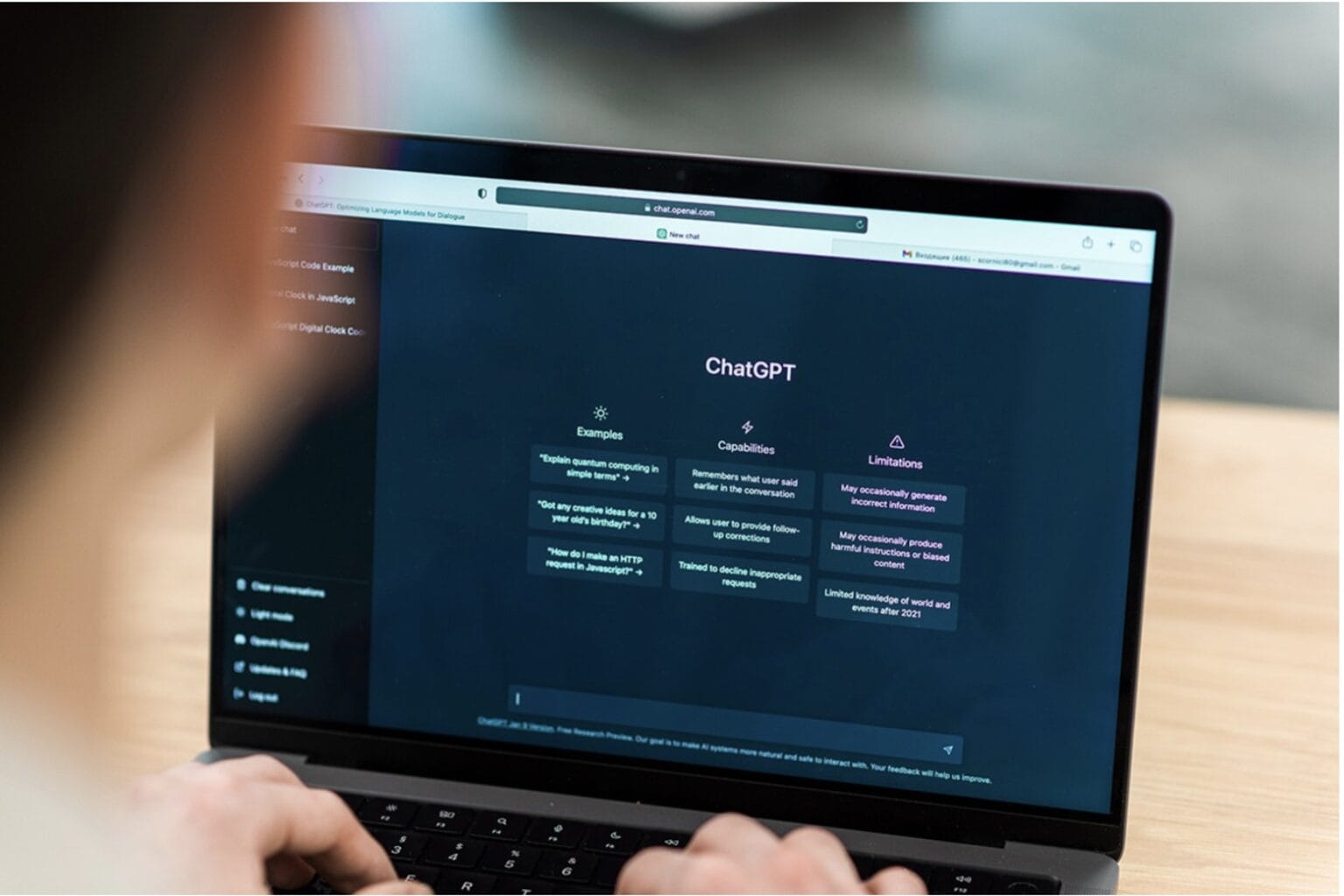

Apple’s new Ferret-UI multimodal large language model could help artificial intelligence systems better understand mobile screens like the one on your iPhone, according to a research paper released Tuesday.

Who stands to benefit? Perhaps the much-maligned Siri voice assistant, which could do more for you on mobile devices. Visually impaired users and developers who need to test user interfaces could benefit, too.
