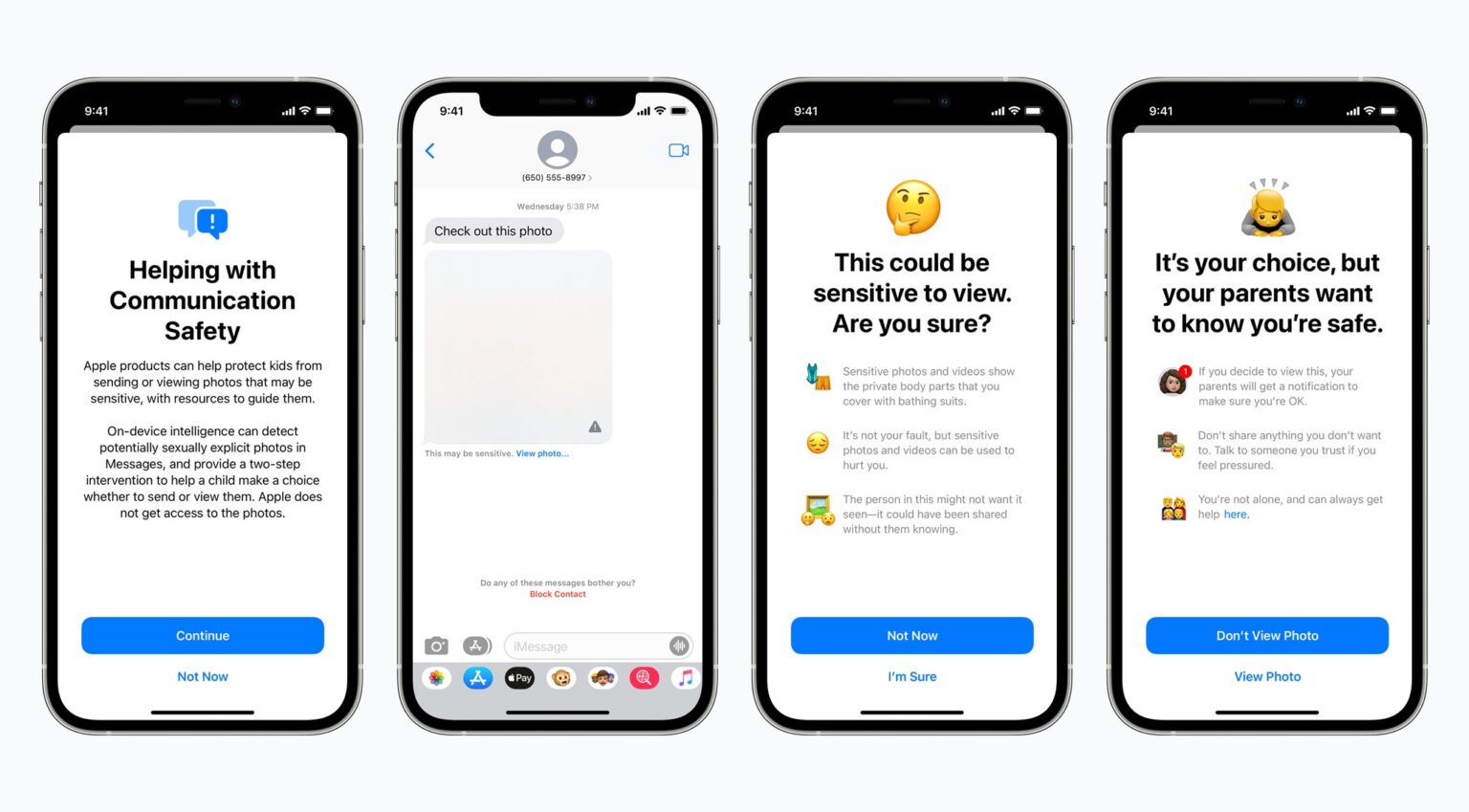

Apple will soon offer a new tool to protect children from sexual predators. The Messages application will be able to detect when an iPhone or iPad user receives or sends sexually explicit photos.

The process will use on-device machine learning so that Apple does not have access to the images.

Kids and parents warned of sexually explicit photos

If a child receives a nude photo in the Messages application on an iPhone or iPad, the image will be blurred out. If the child tries to view it, they will receive an alert that asks, “Are you sure?”

The warning is phrased so a child can understand. It says, in part, “Sensitive photos and videos show the private body parts that you cover with bathing suits.”

A similar process happens if a child attempts to send sexually explicit photos. The child will be warned before the photo is sent.

Part of a larger effort to fight child porn

Apple also announced Thursday that it will scan images stored in iCloud Photos for content that depicts sexually explicit activities involving a child. Any such images will then be reported to the National Center for Missing and Exploited Children.

Siri and Search are also being updated to intervene when users perform searches related to child sexual abuse.

Apple says these features are coming later in 2021 in updates to iOS 15, iPadOS 15, watchOS 8, and macOS Monterey. For more information, visit Apple’s just-launched page devoted to its expanded protections for children.