Apple is making a new push into artificial intelligence, giving developers access to the company’s neural network technology, a move that could mean big things for the apps you’ll use in the future.

While opening up Siri to third-party developers was the most attention-grabbing news from yesterday’s Worldwide Developers Conference keynote, Apple also revealed that it will let developers tap into the company’s artificial neural network technology. Once the dust settles, this could turn out to be the biggest development of WWDC, bar none!

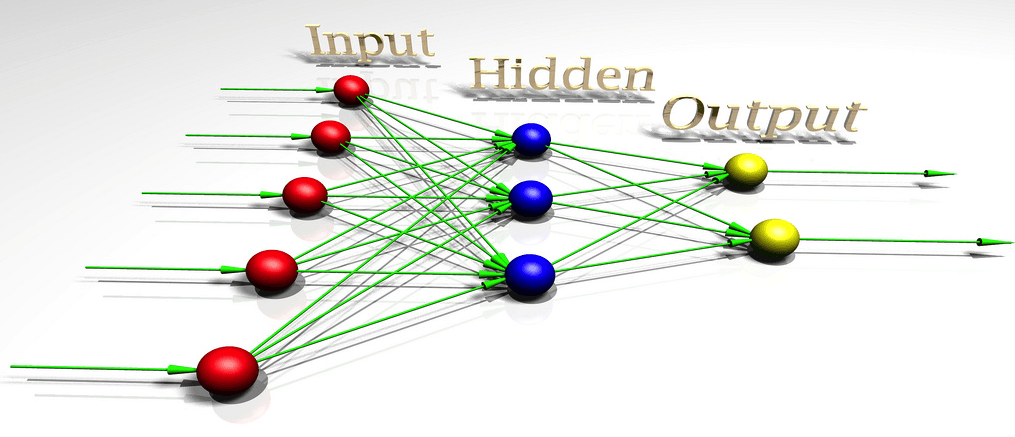

Whether it’s machines that can recognize photos, understand speech or drive a car, neural nets are almost certainly behind any breakthroughs you’ve read about recently.

As Apple explains in accompanying notes about its new neural network API:

“The Accelerate framework’s new basic neural network subroutines (BNNS) is a collection of functions that you can use to construct neural networks. It is supported on OS X, iOS, tvOS, and watchOS, and is optimized for all CPUs supported on those platforms.

BNNS supports implementation and operation of neural networks for inference, using input data previously derived from training. BNNS does not do training, however. Its purpose is to provide very high performance inference on already trained neural networks.”
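To make the inference-versus-training distinction concrete, here is a minimal sketch of the kind of work an inference library does: running inputs through a single fully connected layer whose weights were fixed ahead of time by training done elsewhere. It is written in plain Python for readability and is not BNNS, which is a C API inside Apple’s Accelerate framework. The function names, weights and bias values are hypothetical illustrations. Note that nothing here computes gradients or updates the weights, which mirrors BNNS’s inference-only scope.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def relu(x: float) -> float:
    """Rectified linear activation: negative values become 0."""
    return x if x > 0.0 else 0.0


def fully_connected(weights: Matrix, bias: Vector, inputs: Vector) -> Vector:
    """Inference for one dense layer: out[i] = relu(sum_j weights[i][j] * inputs[j] + bias[i]).

    The weights and bias are treated as read-only: this is a forward pass only,
    with no training step.
    """
    if any(len(row) != len(inputs) for row in weights):
        raise ValueError("each weight row must match the input size")
    if len(bias) != len(weights):
        raise ValueError("bias length must match the number of outputs")
    return [
        relu(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, bias)
    ]


# Hypothetical "already trained" parameters: fixed numbers that are never updated.
TRAINED_WEIGHTS: Matrix = [
    [0.5, -1.0, 2.0],
    [1.0, 1.0, -1.0],
]
TRAINED_BIAS: Vector = [0.0, -0.5]

if __name__ == "__main__":
    # Three input features in, two activations out.
    print(fully_connected(TRAINED_WEIGHTS, TRAINED_BIAS, [1.0, 2.0, 3.0]))  # [4.5, 0.0]
```

In BNNS itself, a comparable layer is set up once with a function such as `BNNSFilterCreateFullyConnectedLayer` and then run on new data with `BNNSFilterApply`, with Apple handling the low-level optimization for each supported CPU.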

By most accounts, Apple is well behind rivals like Facebook and Google when it comes to AI research. While that’s not going to change overnight, opening up some of its tools to developers is certainly a positive move. And, hey, if it means that we all get smarter apps in the meantime, all the better!

Now we just have to wait and see what developers will do with it …