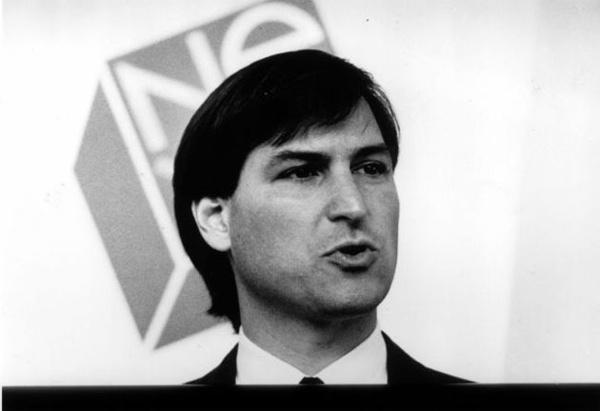

Image: AP, via Guardian UK

Today’s rumors that Steve Jobs may introduce an incremental update to OS X called Snow Leopard at his Worldwide Developers Conference keynote provide a powerful reminder of just how effective the project to replace the Classic Mac OS has been. Buzz on the wires has it that Snow Leopard would be for Intel processors only, completely abandoning the PowerPC platform that Steve Jobs inherited at Apple in 1996. Some have even speculated that Carbon and the last pieces of the original Mac OS toolkit could be similarly discarded in the release. If all that is true (and the latter part is particularly hard to swallow without bricks of salt), it officially marks the death of the Macintosh OS at the hands of its proud successor, OS X.

This is a really significant achievement, and not because I’m nostalgic for MultiFinder. This officially marks the conclusion of the most patient, incremental, and downright conservative campaign of change ever waged by one Steven P. Jobs. At a WWDC much like this one, just 10 years ago, he began to wage that war. Next Monday, he will have won. The Mac is dead. Long live OS X.

Radical change often leads to no change

Make no mistake about it: other than the fixed menu bar at the top of the screen, today’s OS X is far more like the OpenStep operating system that Apple bought with its purchase of NeXT in 1996 than it is like Mac OS 7.6. OS X is built on the Mach microkernel. It runs better on Intel than on PowerPC. Three-paned browsing is more effective than traditional Mac folder and icon interactions. A dock keeps track of frequently used applications and current tasks. Objective-C is the preferred language for powerful, rapid object-oriented development. Three-character file extensions determine file type, not hidden four-character type and creator codes. All system preferences are contained in a single panel instead of dozens of individual controls. It’s kind of amazing. Just look at this OpenStep 4.2 screenshot. It’s all there. The Mac is dead. Long live OS X.

None of this, obviously, should come as a shock. Apple made a brilliant decision when it acquired NeXT in 1996 to create a modern operating system for Macs, and the effort was enormously successful. What’s shocking instead is how gradual the process has been. Twelve years is a century in the computing industry. That’s longer than Steve Jobs’s first tenure at Apple, and even longer than his time away from it. Jobs is not a man known for patience or incremental improvements. What gave him the patience to gradually transform the Classic Mac we all loved into the screaming OS X we all love today? Why didn’t he just kick the badly bruised OS 7.5 to the curb and move on, instead of waiting more than a decade to deliver an operating system very similar to the one he sold to Apple in the mid-’90s?

Simple. He had just spent a decade trying to convince institutions that the radically different, obviously superior hardware and software of NeXT should be the basis for the future of computing, and he had failed. Universities mostly preferred their Macs. Businesses preferred their IBM machines. Even when he compromised and made NeXT software available on Sun and IBM hardware, very few people went for it. A compatibility layer for NeXT software on Windows NT didn’t really go anywhere either. At the end of a grand experiment to build the first computers of the 21st century, Steve Jobs knew in 1996, without any doubt, that the surest way to ensure people would never abandon their dreadful DOS machines and unstable Macs was to argue with them about it. Demanding that people change had failed. Things were so entrenched, in fact, that he concluded the computer industry was going to go quiet for a decade. As he told Wired’s Gary Wolf in the most unguarded interview he has ever given:

The desktop computer industry is dead. Innovation has virtually ceased. Microsoft dominates with very little innovation. That’s over. Apple lost. The desktop market has entered the dark ages, and it’s going to be in the dark ages for the next 10 years, or certainly for the rest of this decade.

It’s like when IBM drove a lot of innovation out of the computer industry before the microprocessor came along. Eventually, Microsoft will crumble because of complacency, and maybe some new things will grow. But until that happens, until there’s some fundamental technology shift, it’s just over.

That’s the voice of a man who had innovated for a decade and seen no change. At the time, I assumed he was just bitter or pessimistic. He wasn’t. He was informed by the insight that new technologies weren’t required to save the computer; people just needed to start adopting the amazing ones that had been developed in the previous decade. And that’s the story of OS X. The greatest innovation in desktop computers since he spoke those words hasn’t been the creation of any significant new technology. It’s been making the NeXT technologies appealing, intuitive, and comfortable for tens of millions of Mac users.

Disguising the difference

When Apple bought NeXT, Jobs almost forgot the lessons of his struggle to grow OpenStep’s market share. The initial plan was to create Rhapsody, a quick-and-dirty port of OpenStep to PowerPC hardware with a Mac OS 8-like interface. Rhapsody would run beautiful, object-oriented OpenStep software, while something called, seriously, “Blue Box” would magically run old, crash-prone, poorly multitasking Mac applications. The idea seemed OK at first. Apple would have a modern OS on the market as fast as possible, and the new platform could technically still run existing Mac programs.

But Rhapsody was actually a disaster in the making. Developers rebelled. Adobe wouldn’t port Photoshop to the OpenStep platform. Microsoft would never make a new version of Office that required a rewrite in Objective-C. It was then that Jobs saw how to effect real change: do it slowly, and don’t let anyone realize what you’re doing. At WWDC 1998, months after Rhapsody was supposed to ship, Jobs announced that plans had changed. Instead of reserving modern OS capabilities for NeXT-based technologies, a new API called Carbon would let existing Mac applications go modern with only a few tweaks. Objective-C and other NeXT technologies were de-emphasized and pushed to the background. Carbon would be the future. No one needed to change; they just needed to eliminate a few particularly bad Mac OS Toolbox calls. Everything else was the same, or so it seemed.

When the first consumer release of Mac OS X shipped as a public beta in September 2000, it was much more Mac than NeXT. From its “lickable” user interface to its Classic compatibility environment, it was an evolution of the Mac experience. But looks can be deceiving. From the time the first Mac OS X release shipped, Apple has very quietly taken away everything that once seemed essential to the Mac experience in favor of something new that looks a lot more like OpenStep than like its Mac roots. It happened so gradually that when Apple dropped support for Classic Mac applications with the arrival of Leopard, only technical writers working in FrameMaker complained, which is pretty remarkable.

Succeeding out of failure

The transition to a legacy-free OS X over the past decade has been such an overwhelming success for one simple reason: Apple has masked the degree of change it was implementing in each release. Mac OS X 10.0 was actually a radical change, but it felt like the leap from OS 7.6 to 8, not from OS 8 to Red Hat Linux. Each successive version has kept just enough of the familiar to make the changes easier to handle. And as we all know, a lot of small changes ultimately lead to radical differences. When Microsoft and Adobe finally built modern versions of their applications for OS X with Apple’s Xcode tools instead of the more comfortable CodeWarrior environment, it was clear that Jobs had won the adoption race through slow and steady steps forward. And that’s where we are today.

Just as Steve Jobs said, the decade ending in 2006 didn’t necessarily see a lot of desktop innovation. But today, after the complete adoption of the NeXT way of doing things throughout Apple, its developers, and Mac users, we’re finally up to the state of the art of 1996. As it turns out, all of us could stomach massive change; we just needed a decade to digest it. Thank goodness Steve had such a terrible time getting NeXT technologies adopted the first time around. Otherwise, they might never have been slipped into our Mac Kool-Aid gradually enough for us to learn to love the taste even more than the Classic Mac.

30 responses to “WWDC Flashback: Why It’s Taken 10 Years from Carbon to Snow Leopard”

Very nice article! Thank you!

I think this highlights a really important point that lots of people overlook: Steve Jobs is not great because he’s always right; he’s great because he has the ability to derive value from failure. If you look at the trajectory of Steve Jobs and Apple, there have been some serious failures (Apple III, Lisa, Newton, NeXT), but in every case they’ve been able to learn from the failures and adapt, change, and improve. The original Mac was born from the ashes of the Lisa. I’m sure the iPod and iPhone learned a lot from the Newton, and clearly OS X was born from some hard-fought lessons with NeXT.

great read. now that the change was made. now the innovation starts…? looks like an exciting decade to come (wish i had money to invest in apple)

That was Awesome Pete… just fantastic!

Great post. Just so you know, though, the link to that screenshot isn’t working.

great piece!

Thanks for the heads-up, cmac. I fixed the link to the screenshot.

Really good article!

I’m still loving my OS 9 machine :)

hey… still loving the System 6.5.3 machine (68k no less!).

And also loving OS X. The only annoying thing has been the slow deprecation of classic AFP. It means copying over a network sometimes involves hopping to an intermediary machine (OS 9 using TCP/IP file sharing)… but even that’s a bit borked these days. Still, that’s only a minor quibble. hehe

Great article except for:

Quote: Three-character file extensions determine file type, not hidden four-character type and creator codes.

I couldn’t disagree more with this. The embedded “this program created this” information is MUCH better than a three-character extension.

But then there are a lot of things that I would do differently. I guess most would say that (that they would do things differently).

great article!

Hey Olson,

I actually totally agree with you. I wasn’t saying that OS X’s method of file extensions was superior to the old days of creator and type codes, just that the old Mac way has been discarded. It’s one of the changes that hasn’t been a benefit, although bundles for application files definitely are better than the old world of the data fork and the resource fork.

The following is not accurate:

—————-

When Microsoft and Adobe finally created modern versions of their applications for OS X that were built using Apple’s XCode tools instead of the more comfortable CodeWarrior environment, it was clear that Jobs had won the adoption race through slow and steady steps forward.

—————-

Though I suppose it depends on your definition of “modern versions.” If by modern you mean a Mac OS X-native Carbon version of their applications, then you’re wrong: the first “modern” Carbon versions of their applications were built with CodeWarrior. On the other hand, if by “modern versions” you mean Universal Binary versions, then you’d be correct, as Xcode is required to build Universal Binary applications and CodeWarrior had to be abandoned. If this latter definition is what you meant, then I’d object to labeling these “modern versions.” Prior to the introduction of the Intel Macs there was absolutely no difference between an application built with Xcode and one built with CodeWarrior; the application was every bit as “modern” regardless of which tool compiled it.

The move to Xcode by Microsoft and Adobe was made out of necessity, in order to build Intel-native applications, not in order to create a “modern” application.

It’ll be interesting to see what (if anything) about 10.6 is announced at WWDC and how 10.6 eventually turns out. I’m doubtful that most of the rumors about 10.6 are accurate – specifically the timeframe, lack of Carbon and lack of PPC support. But I’ve been wrong more than I can count about Apple rumors.

Wow. Journalism. I tread through so much crap on the internet to get the information I want, it was very weird to read a true article. Thanks for the breath of fresh air.

Thanks for the kind words, folks. I’ve been thinking about this topic for a long time, but I’ve been super-slammed with a major project that has definitely kept me away from blogging (and occasionally reduced the quality of my thinking when I did blog), so it felt good to actually share something of value.