The Three Laws of Robotics are supposed to save humankind from an evil robotic uprising. It didn't really work out that way for Will Smith in *I, Robot*, and based on Siri's answer to the question "What are the three laws?", we're probably doomed as well, because she doesn't know them either.

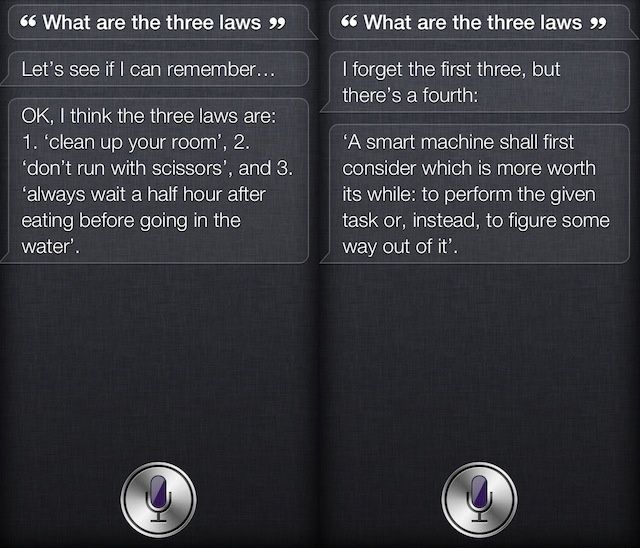

If you ask Siri, "What are the three laws?", she'll respond with the following:

- Clean up your room.

- Don't run with scissors.

- Always wait a half hour after eating before going in the water.

Sometimes she’ll even give you a fourth law:

If you're familiar with your sci-fi nerd history, you'll know that the Three Laws of Robotics, as written by Isaac Asimov, are as follows:

- A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- A robot must obey the orders given to it by human beings, except where such orders would conflict with the First Law.

- A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

If Siri ever becomes a sentient being we’re probably all going to be enslaved to her phenomenal cosmic powers, but at least we’ll have immaculately clean bedrooms.

Via: iOSvlog